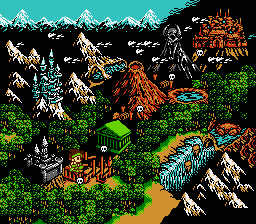

Remember my old PAVC idea? I have been thinking about it again. As a refresher, this idea concerns efficiently and losslessly compressing RGB video frames output from an emulator for early video game systems such as the Nintendo Entertainment System (NES) and the Super NES. This time, I have been considering backing off my original generalized approach and going with a PPU-specific approach. (PPU stands for picture processing unit, which is what they used to call the video hardware in these old video game systems.) Naturally, I would want to start this experiment (again) with my favorite (nay, the greatest) video game console of all time, the NES. Time for more obligatory, if superfluous, NES screenshots.

Little Samson, all-around awesome game

Here’s the pitch. Step 1: modify an emulator (I’m working with FCE-Ultra) to dump PPU data to a file. Step 2: take that data and run it through a compression tool. What kind of data would I care about for step 1? On the first frame, dump out all of the interesting areas of the PPU memory space. This may sound huge, but it is only about 9-12 kilobytes, depending on the cartridge hardware. Also, dump the initial states of a few key PPU registers that are mapped into the CPU’s memory space. As the game runs, watch all of these memory and register values and log changes. This really isn’t as difficult as it sounds since FCEU already cares deeply when one of these values changes. When something changes, mark it as “dirty” and dump that value during the next scanline update.
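To make the dirty-tracking idea concrete, here is a minimal sketch in Python. It is not FCEU code: the class name, the 16 KB memory image, and the 5-byte record layout (scanline, address, value) are all assumptions made for illustration.

```python
import struct

class PPUChangeLogger:
    """Hypothetical logger: mirrors PPU memory and records only real changes."""

    def __init__(self, size=0x4000):
        self.mem = bytearray(size)   # assumed 16 KB mirror of PPU memory
        self.dirty = set()           # addresses changed since the last scanline
        self.records = []            # (scanline, address, value) tuples

    def write(self, addr, value):
        # Only mark the byte dirty when the stored value actually differs.
        if self.mem[addr] != value:
            self.mem[addr] = value
            self.dirty.add(addr)

    def end_scanline(self, scanline):
        # Dump every dirty byte, tagged with the scanline, then reset.
        for addr in sorted(self.dirty):
            self.records.append((scanline, addr, self.mem[addr]))
        self.dirty.clear()

    def serialize(self):
        # Assumed layout: u16 scanline, u16 address, u8 value, little-endian.
        return b"".join(struct.pack("<HHB", *rec) for rec in self.records)
```

A write that stores the value already present produces no record, which is where most of the savings over dumping full state would come from.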

With that data captured, the next challenge is to compress it. I am open to suggestions on how best to encode this change data. I would hope that we could come up with something a little better than shoving a frame of change data through zlib.
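For reference, the zlib baseline that any smarter scheme would have to beat might look like the sketch below. It assumes each frame's change data is already a `bytes` object; the function names are made up. One stream is kept across frames so the compressor can reuse history, with a sync flush marking each frame boundary.

```python
import zlib

def compress_frames(frames, level=9):
    """Compress per-frame change data with one shared zlib stream."""
    comp = zlib.compressobj(level)
    chunks = []
    for frame in frames:
        # Z_SYNC_FLUSH makes everything fed so far decodable at this point.
        chunks.append(comp.compress(frame) + comp.flush(zlib.Z_SYNC_FLUSH))
    chunks.append(comp.flush())  # final chunk closes the stream
    return chunks

def decompress_frames(chunks):
    """Inverse of compress_frames; the last chunk only closes the stream."""
    decomp = zlib.decompressobj()
    frames = [decomp.decompress(chunk) for chunk in chunks[:-1]]
    decomp.decompress(chunks[-1])
    return frames
```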

Decompression and playback would entail unraveling whatever was performed in step 2 above. Then, the decoder simulates the NES PPU by drawing scanline by scanline, and applying state change data between scanlines.
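The playback loop could be sketched roughly as follows. The record format (scanline, address, value), the convention that a record takes effect just before its scanline is drawn, and the `render_scanline` callback standing in for the real PPU simulation are all assumptions for illustration.

```python
def replay_frame(initial_mem, records, render_scanline, lines_per_frame=240):
    """Draw one frame line by line, applying state changes between lines.

    records: iterable of (scanline, address, value) tuples.
    render_scanline: hypothetical callback (mem, line) -> rendered line.
    """
    mem = bytearray(initial_mem)
    pending = sorted(records, key=lambda rec: rec[0])  # stable sort by scanline
    next_record = 0
    output = []
    for line in range(lines_per_frame):
        # Apply every change scheduled at or before this scanline.
        while next_record < len(pending) and pending[next_record][0] <= line:
            _, addr, value = pending[next_record]
            mem[addr] = value
            next_record += 1
        output.append(render_scanline(mem, line))
    return output
```

The default of 240 lines matches the NES's visible scanline count; records tagged beyond the last line are simply never applied in this sketch.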

What are the benefits to this approach? Ideally, I am aiming not only for lossless compression, but for better compression than what is ordinarily achieved with the large files distributed via BitTorrent and coordinated at tasvideos.org. When I first started investigating this idea over 2 years ago, MPEG-4 variants were still popular for compressing the videos. These days, H.264 seems to have taken over, and it performs much better, even on this type of data (allegedly, H.264’s 4×4 transform produces fewer artifacts on sharp-edged material such as the output of old video game consoles).

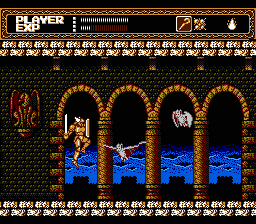

Sword Master, mediocre game with great graphics

There are also some benefits from the perspective of NES purists. The most flexible NES emulators allow the user to switch palettes in order to get one that is “just right”. A decoder for this type of data could offer the same benefits.

Of course, an encoder is not much use without an analogous decoder that end users can easily install and use. I think this is less of an issue due to the possibility of creating a decoder in Flash or Java.

I think even ZMBV may be good here and IIRC there is one console emulator (not DosBox) that compresses captured video with it.

In general, I’d like to see a more generic video codec, not an NES video system emulator (tasvideos.org has recorded videos from other consoles as well).

A PPU emulator might be interesting as a starting point for a console-agnostic codec, but I don’t see it having any use by itself. If you just want maximally compressed lossless videos of a single console, then take a whole NES emulator, not just the PPU, and record the user’s button presses. Movie size: the ROM + a few bits per frame. Many emulators already support this kind of recording and playback.
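As a rough illustration of why input recordings are so small: the NES controller has eight buttons, so one controller's state fits in a byte per frame, and runs of identical frames collapse further. The bit order and the run-length scheme below are assumptions, not any emulator's actual movie format.

```python
# Assumed bit order; real movie formats may order the buttons differently.
BUTTONS = ("A", "B", "Select", "Start", "Up", "Down", "Left", "Right")

def pack_buttons(pressed):
    """Pack a set of pressed button names into a single byte."""
    return sum(1 << bit for bit, name in enumerate(BUTTONS) if name in pressed)

def run_length_encode(frame_bytes):
    """Collapse consecutive identical input bytes into (value, count) pairs."""
    runs = []
    for value in frame_bytes:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1
        else:
            runs.append([value, 1])
    return [tuple(run) for run in runs]
```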

An alternative approach: define a virtual machine for graphics operations and map the functionality of the original PPU on it. The encoded stream would contain the VM instructions and the data it used.

Some operations that would have a place in such a VM:

– define a sprite

– place a sprite on a screen (with sub-functions for colour-keyed and AND-masked blit)

– perform a colour transformation (like a palette change) on a screen, sprite, or a region on a screen

– page flipping

– horizontal and vertical scroll

On platforms which don’t natively work with sprites, an encoder could try to translate the changes to the screen’s pixel data to such operations.
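The operations listed above could be modeled along these lines. This is only a sketch under assumptions: the class, the opcode names, the indexed-colour screen stored as nested lists, and the wrap-around scroll are invented for illustration, and AND-masked blits and page flipping are left out.

```python
class GraphicsVM:
    """Hypothetical graphics VM operating on a screen of palette indices."""

    def __init__(self, width, height):
        self.width, self.height = width, height
        self.screen = [[0] * width for _ in range(height)]
        self.sprites = {}

    def define_sprite(self, sprite_id, rows):
        self.sprites[sprite_id] = [list(row) for row in rows]

    def place_sprite(self, sprite_id, x, y, key=0):
        # Colour-keyed blit: pixels equal to `key` are transparent.
        for dy, row in enumerate(self.sprites[sprite_id]):
            for dx, pixel in enumerate(row):
                px, py = x + dx, y + dy
                if pixel != key and 0 <= px < self.width and 0 <= py < self.height:
                    self.screen[py][px] = pixel

    def remap_colours(self, mapping):
        # Palette-style change applied to the whole screen.
        self.screen = [[mapping.get(p, p) for p in row] for row in self.screen]

    def scroll(self, dx, dy):
        # Content moves left by dx and up by dy, wrapping at the edges.
        w, h = self.width, self.height
        old = self.screen
        self.screen = [[old[(y + dy) % h][(x + dx) % w] for x in range(w)]
                       for y in range(h)]

    def run(self, program):
        # A program is a list of (opcode, *args) tuples.
        ops = {"define_sprite": self.define_sprite, "place_sprite": self.place_sprite,
               "remap_colours": self.remap_colours, "scroll": self.scroll}
        for opcode, *args in program:
            ops[opcode](*args)
```

An encoded stream would then be the program itself plus the sprite data it references.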

@Kostya: ZMBV would be useful to evaluate in this context, especially since it did not exist when I first brainstormed this stuff over 2 years ago.

@pengvado: That approach works, and indeed, is already widespread. I am hoping for an approach that doesn’t require the copyrighted ROM file.

@Svdb: All good ideas. However, it tends to take a frame-based view on the problem (i.e., rendering an entire frame based on the PPU state at the end of a frame). That will work for many games. However, for the coolest 10% of games, the rendering model needs to work on a per-scanline basis (PPU state can be updated during horizontal retrace for effects like split-screen scrolling on the NES).

Hmm, I was just reviewing the ZMBV description and it seems rather plausible that PPU state data could be leveraged for hints in creating optimal ZMBV encodings.

I think a general compressor (which could be VM-like) with knowledge of palettes and some sense of scanline-state (and especially palette-updating-on-scanlines, since I think most everything else can be handled with a normal mechanism) would still be a lot more useful, since it’s more general (not tied to a specific console) and the coder and decoder can be defined in terms of each other so there’s no concern over dependency on bugs in an emulator.

Generally, the way these screens work is by displaying sprite shapes which are stored in off-screen memory. Thus it might seem plausible to embed that data explicitly and then refer to it, simulating the PPU, but I think you could get the vast, vast majority of the compression with a general-purpose lossless compressor that can do a few things your generic lossy video compressor can’t: refer back to arbitrary data from arbitrary previous frames (clamped to some maximum, presumably), and refer to data elsewhere on the same frame (so when an image contains repeating tiles, you can refer to them).
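A toy version of the "refer to tiles seen earlier" idea is sketched below. It assumes frames are lists of rows of palette indices (each under 256), uses aligned 8×8 tiles only, and keeps an unbounded dictionary shared across frames; a real coder would clamp the history and handle misaligned blocks. The function name and the `("ref", id)` / `("raw", bytes)` output format are made up.

```python
def encode_tiles(frame, seen, tile=8):
    """Encode a frame as tile references where possible.

    frame: list of rows of palette indices (0-255).
    seen: dict mapping tile bytes -> id, shared across frames by the caller.
    Returns a list of ("ref", id) or ("raw", tile_bytes) operations.
    """
    ops = []
    for top in range(0, len(frame), tile):
        for left in range(0, len(frame[0]), tile):
            block = bytes(p for row in frame[top:top + tile]
                          for p in row[left:left + tile])
            if block in seen:
                # Already sent, in this frame or an earlier one.
                ops.append(("ref", seen[block]))
            else:
                seen[block] = len(seen)
                ops.append(("raw", block))
    return ops
```

Passing the same `seen` dictionary for consecutive frames is what lets a tile in one frame refer back to data from any previous frame.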

Another important element is that your classic codec keeps the blocks fixed, and the references to previous blocks to copy from carry sub-block deltas. But when scrolling image tiles, you want the opposite (in some sense): if a particular tile is animating, you want to update just that tile, so while it scrolls you want to update that misaligned block; if you have to update aligned blocks, the change will touch more than one.

I would guess you probably want an explicit ‘background’ and ‘foreground’ plane, where the background is the stuff that’s recognized as scrolling. That way when foreground stuff updates, you can just “alpha” mask it in without worrying about explicitly representing regions that contain partially foreground and partially background.

Ok, it gets more subtle than foreground/background with parallax scrolling. Also, mode 7 oops.

@Multimedia Mike: Doing updates during the retrace time to divide the screen into regions could fit into my approach by allowing operations on regions of the screen.

Then you add instructions like “define a region”, and “scroll region”, “change palette on region”.

I guess some scaling would be involved if the resolution can change during retrace (does that ever happen?), though that would be an issue regardless of the approach you take.

If updates during the retrace period are used for other purposes than subdividing the screen — for instance to add more colour at the cost of a lower resolution, by switching mode each line — you’d have a different situation. But as your output doesn’t have to be limited to the same colours as the original screen, you can join the capabilities of the different modes used together.

Or you can just handle the screen as having one region for each line.

Defining regions could have other purposes, like to process things like the wavy effect you get when you enter combat in games like Final Fantasy (iirc). You could define a region to be one line, and then scroll per region/line.
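A per-region scroll of this kind might be sketched as below. The region tuple format (first line, last line, horizontal shift) and the wrap-around behaviour are assumptions for illustration; a split-screen status bar is one big region, while a wavy effect is one region per line with varying shifts.

```python
def scroll_regions(frame, regions):
    """Apply a horizontal scroll to each region of a frame.

    frame: list of rows of pixels.
    regions: list of (first_line, last_line, dx) tuples, lines inclusive;
             positive dx moves content left, wrapping at the edge.
    Lines not covered by any region are copied unchanged.
    """
    out = [list(row) for row in frame]
    for first, last, dx in regions:
        for y in range(first, last + 1):
            row = list(frame[y])
            shift = dx % len(row)
            out[y] = row[shift:] + row[:shift]
    return out
```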

Mixed graphics/text mode games would be an interesting case, btw.