When you are a certain type of nerd who has been on the internet for long enough, you might run the risk of accumulating a lot of projects and websites. Website-wise, I have this multimedia.cx domain on which I host a bunch of ancient static multimedia documents as well as this PHP/MySQL-based blog. Further, there are 3 other PHP/MySQL-based blogs hosted on subdomains. Also, there is the wiki, another PHP/MySQL web app. A few other custom PHP- and Python-based apps are running around on the server as well.

While things largely run on auto-pilot, I need to concern myself every now and then with their ongoing upkeep.

If you ask N different people about the meaning of the term ‘DevOps’, you will surely get N different definitions. However, whenever I have to perform VM maintenance, I like to think I am at least dipping my toes into the DevOps domain. At the very least, the job seems to be concerned with making infrastructure setup and upgrades reliable and repeatable.

Even if it’s not fully automated, at the very least, I have generated a lot of lists for how to make things work (I’m a big fan of Trello’s Kanban boards for this), so it gets easier every time (ideally, anyway).

Infrastructure History

For a solid decade, from 2004 to 2014, everything was hosted on shared, cPanel-based web hosting. In mid-2014, I moved from the shared hosting over to my own VPSs, hosted on DigitalOcean. I must have used Ubuntu 14.04 at the time, as I look down down the list of Ubuntu LTS releases. It was with much trepidation that I undertook this task (knowing that anything that might go wrong with the stack, from the OS up to the apps, would all be firmly my fault), but it turned out not to be that bad. The earliest lesson you learn for such a small-time setup is to have a frontend VPS (web server) and a backend VPS (database server). That way, a surge in HTTP requests has no chance of crashing the database server due to depleted memory.

At the end of 2016, I decided to refresh the VMs. I brought them up to Ubuntu 16.04 at the time.

Earlier this year, I decided it would be a good idea to refresh the VMs again since it had been more than 3 years. The VMs were getting long in the tooth. Plus, I had seen an article speculating that Azure, another notable cloud hosting environment, might be getting full. It made me feel like I should grab some resources while I still could (resource-hoarding was in this year).

I decided to use 18.04 for these refreshed VMs, even though 20.04 was available. I think I was a little nervous about 20.04 because I heard weird things about something called snap packages being the new standard for distributing software for the platform and I wasn’t ready to take that plunge.

Which brings me to this month’s VM refresh in which I opted to take the 20.04 plunge.

Oh MediaWiki

I’ve been the maintainer and caretaker of the MultimediaWiki for 15 years now (wow! Where does the time go?). It doesn’t see a lot of updating these days, but I know it still serves as a resource for lots of obscure technical multimedia information. I still get requests for new accounts because someone has uncovered some niche technical data and wants to make sure it gets properly documented.

MediaWiki is quite an amazing bit of software and it undergoes constant development and improvement. According to the version history, I probably started the MultimediaWiki with the 1.5 series. As of this writing, 1.35 is the latest and therefore greatest lineage.

This pace of development can make it a bit of a chore to keep up to date. This was particularly true in the old days of the shared hosting when you didn’t have direct shell access and so it’s something you put off for a long time.

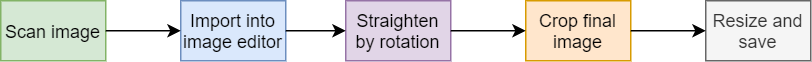

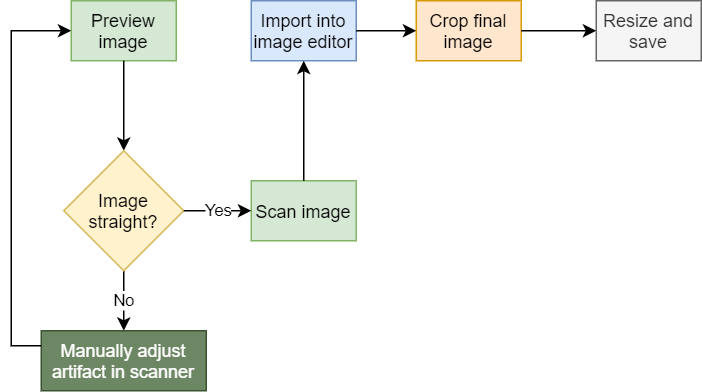

Honestly, to be fair, the upgrade process is pretty straightforward:

- Unpack a set of new files on top of the existing tree

- Run a PHP script to perform any database table upgrades

Pretty straightforward, assuming that there are no hiccups along the way, right? And the vast majority of the time, that’s the case. Until it’s not. I had an upgrade go south about a year and a half ago (I wasn’t the only MW installation to have the problem at the time, I learned). While I do have proper backups, it still threw me for a loop and I worked for about an hour to restore the previous version of the site. That experience understandably left me a bit gun-shy about upgrading the wiki.

But upgrades must happen, especially when security notices come out. Eventually, I created a Trello template with a solid, 18-step checklist for upgrading MW as soon as a new version shows up. It’s still a chore, just not so nerve-wracking when the steps are all enumerated like that.

As I compose the post, I think I recall my impetus for wanting to refresh from the 16.04 VM. 16.04 used PHP 7.0. I wanted to upgrade to the latest MW, but if I tried to do so, it warned me that it needed PHP 7.4. So I initialized the new 18.04 VM as described above… only to realize that PHP 7.2 is the default on 18.04. You need to go all the way to 20.04 for 7.4 standard. I’m sure it’s possible to install later versions of PHP on 16.04 or 18.04, but I appreciate going with the defaults provided by the distro.

I figured I would just stay with MediaWiki 1.34 series and eschew 1.35 series (requiring PHP 7.4) for the time being… until I started getting emails that 1.34 would go end-of-life soon. Oh, and there are some critical security updates, but those are only for 1.35 (and also 1.31 series which is still stubbornly being maintained for some reason).

So here I am with a fresh Ubuntu 20.04 VM running PHP 7.4 and MediaWiki 1.35 series.

How Much Process?

Anyone who decides to host on VPSs vs, say, shared hosting is (or ought to be) versed on the matter that all your data is your own problem and that glitches sometimes happen and that your VM might just suddenly disappear. (Indeed, I’ve read rants about VMs disappearing and taking entire un-backed-up websites with them, and also watched as the ranters get no sympathy– “yeah, it’s a VM; the data is your responsibility”) So I like to make sure I have enough notes so that I could bring up a new VM quickly if I ever needed to.

But the process is a lot of manual steps. Sometimes I wonder if I need to use some automation software like Ansible in order to bring a new VM to life. Why do that if I only update the VM once every 1-3 years? Well, perhaps I should update more frequently in order to ensure the process is solid?

Seems like a lot of effort for a few websites which really don’t see much traffic in the grand scheme of things. But it still might be an interesting exercise and might be good preparation for some other websites I have in mind.

Besides, if I really wanted to go off the deep end, I would wrap everything up in containers and deploy using D-O’s managed Kubernetes solution.