People talk about building a PC from scratch. I’ve been doing this very thing for a long time. But those who have gotten into it in recent years may not grasp how the process has changed. I’ve been meaning to dash off this post for a while in order to illustrate how “building a PC from scratch” has evolved over time.

Mostly, this is a function of how more and more stuff has become integrated onto the motherboard. Back in the day, the motherboard was the thing that tied together a bunch of interchangeable components. Most of those components are integrated onto the motherboard these days. And that’s for a PC. More and more, computing means “devices”, i.e., all-in-1 integrated machines such as phones, tablets, and increasingly fully integrated laptops (meaning that nothing inside can be swapped or upgraded).

This also has implications for retail, notably in the case of the beloved Fry’s Electronics franchise (RIP).

Building In 1995

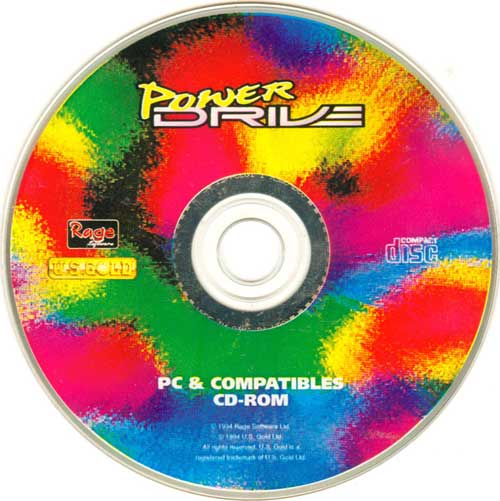

It was 30 years ago this semester (fall 1995) that I left home for university studies. In advance of doing so, I built my own computer. My family had owned a series of 3 different PCs from 1984 up until this point: an 8088, a 286, and a 486SX, respectively. As I type this, it feels strange to reflect on the “1 computer per family, at most” paradigm of yesteryear. I still remember all the individual types of parts I collected to assemble that computer, even if I don’t remember the exact specs or brands: