I just can’t stop living in the past. To that end, I’ve been playing around with the Game Music Emu (GME) library again. This is a software library that plays an impressive variety of special music files extracted from old video games.

I have just posted a set of GME tools and associated utilities on GitHub.

Clone the repo and try them out. The repo includes a small test corpus, since one of the most tedious parts of playing these files tends to be tracking them down in the first place.

Players

I started by writing some simple command line audio output programs based on GME. GME has to be the simplest software library that it has ever been my pleasure to code against. All it took was a quick read through the gme.h header file and it was immediately obvious how to write a simple program.

First, I wrote a command line tool that output audio through PulseAudio on Linux. Then I made a second program that used ALSA. Guess what I learned through this exercise? PulseAudio is actually far easier to program than ALSA.

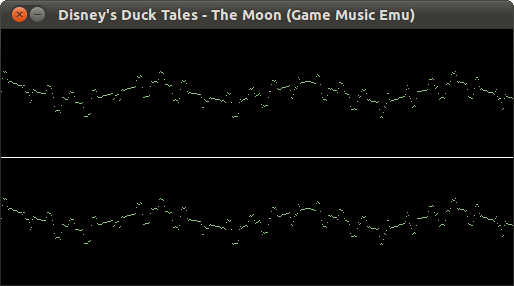

I also created an SDL player, seen in my last post regarding how to write an oscilloscope. I think I have the A/V sync correct now. It’s a little more fun to use than the command line tools. It also works on non-Linux platforms (tested at least on Mac OS X).

Utilities

I also wrote some utilities. I’m interested in exporting metadata from these rather opaque game music files in order to make them a bit more accessible. To that end, I wrote gme2json, a program that uses the GME library to fetch data from a game music file and then print it out in JSON format. This makes it trivial to extract the data from a large corpus of game music files and work with it in many higher-level languages.
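
The fiddly part of emitting JSON from C is escaping the strings, so here is a small self-contained sketch of that step. It is not gme2json itself. The `track_meta` struct, its field names, and both function names are hypothetical, and the code assumes the metadata strings are already UTF-8 (bytes above 0x7F are passed through untouched).

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical per-track metadata; the field names are illustrative. */
struct track_meta {
    const char *game;
    const char *song;
    int length_ms;
};

/* Write s into out as a quoted JSON string, escaping quotes, backslashes
   and control characters. Returns the length written, or -1 if out is
   too small. */
static int json_escape(const char *s, char *out, size_t cap)
{
    size_t n = 0;

    if (cap < 3)
        return -1;                 /* need room for two quotes and a NUL */
    out[n++] = '"';
    for (; *s; s++) {
        unsigned char c = (unsigned char)*s;
        char tmp[8];
        int len;

        if (c == '"' || c == '\\')
            len = snprintf(tmp, sizeof tmp, "\\%c", c);
        else if (c < 0x20)
            len = snprintf(tmp, sizeof tmp, "\\u%04x", c);
        else {
            tmp[0] = (char)c;
            len = 1;
        }
        /* Keep room for the closing quote and the terminating NUL. */
        if (n + (size_t)len + 2 > cap)
            return -1;
        memcpy(out + n, tmp, (size_t)len);
        n += (size_t)len;
    }
    out[n++] = '"';
    out[n] = '\0';
    return (int)n;
}

/* Format one track as a single JSON object. Returns the length written,
   or -1 if any buffer is too small. */
static int track_to_json(const struct track_meta *m, char *out, size_t cap)
{
    char game[256], song[256];
    int n;

    assert(m != NULL && out != NULL);
    if (json_escape(m->game, game, sizeof game) < 0 ||
        json_escape(m->song, song, sizeof song) < 0)
        return -1;
    n = snprintf(out, cap, "{\"game\":%s,\"song\":%s,\"length_ms\":%d}",
                 game, song, m->length_ms);
    return (n < 0 || (size_t)n >= cap) ? -1 : n;
}
```

In a real tool the struct would be filled from whatever track information GME reports, and the object printed once per track so that a script in another language can load the whole corpus with its stock JSON parser.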

Finally, I wrote a few utilities that repack certain ad-hoc community-supported game music archives into… well, an ad-hoc game music archive of my own devising. Perhaps it’s a bit of NIH syndrome, but I don’t think some of these ad-hoc community formats were very well thought out; or perhaps they made sense a decade or more ago and no longer do. I guess I’m trying to bring a bit of innovation to this archival process.

Endgame

I haven’t given up on that SaltyGME idea (playing these game music files directly in the Google Chrome web browser). All of this ancillary work is leading up to that goal.

Silly? Perhaps. But I still think it would be really neat to be able to easily browse and play these songs, and make them accessible to a broader audience.