As briefly mentioned in my last Theora post, I think FFmpeg’s Theora decoder can exploit multiple CPUs in a few ways: 1) Perform all of the DC prediction reversals in a separate thread while the main thread is busy decoding the AC coefficients (meanwhile, I have committed an optimization where the reversal occurs immediately after DC decoding in order to exploit CPU cache); 2) create n separate threads and assign each (num_slices / n) slices to decode (where a slice is a row of the image that is 16 pixels high).

So there’s the plan. Now, how to take advantage of FFmpeg’s threading API (which supports POSIX threads, Win32 threads, BeOS threads, and even OS/2 threads)? Would it surprise you to learn that this aspect is not extensively documented? Time to reverse engineer the API.

I also did some Googling regarding multithreaded FFmpeg. I mostly found forum posts complaining that FFmpeg isn’t effectively leveraging however many n cores someone’s turbo-charged machine happens to present to the OS, as demonstrated by their CPU monitoring tool. Since I suspect this post will rise in Google’s top search hits on the topic, allow me to apologize to searchers in advance by explaining that multimedia processing, while certainly CPU-intensive, does not necessarily lend itself to multithreading/multiprocessing. There are a few bits here and there in the encode or decode processes that can be parallelized but the entire operation overall tends to be rather serial.

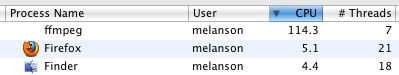

So this is the goal:

…to see FFmpeg break through the 99.9% barrier in the CPU monitor. As an aside, it briefly struck me as ironic that people want FFmpeg to use as much of as many available CPUs as possible but scorn the project from my day job for being quite capable of doing the same.

Moving right along, let’s see what can be done about exploiting what limited multithreading opportunities that Theora affords.

First off: it’s necessary to explicitly enable threading at configure-time (e.g., “–enable-pthreads” for POSIX threads on Unix flavors). Not sure why this is, but there it is.

Next: FFmpeg apparently allocates a thread pool during initialization based on the -threads command line parameter. Codecs can exploit these threads by loading up an array of thread-specific context data structures and calling AVCodecContext::execute() with the context array, the number of threads to use, and a function to execute in each of those threads.

Here is a working, simple example of a thread context data structure and a thread function (works circa FFmpeg SVN 20000):

Then, in some function from the main thread:

And here is the relevant output using ‘-threads 4’:

[theora @ 0x1004000]there are 4 threads available

[theora @ 0x1004000]sending work to the threads and waiting…

[theora @ 0x1004000]sleeping for 3 seconds…

[theora @ 0x1004000]sleeping for 2 seconds…

[theora @ 0x1004000]sleeping for 5 seconds…

[theora @ 0x1004000]sleeping for 5 seconds…

[theora @ 0x1004000]done sleeping for 2 seconds

[theora @ 0x1004000]done sleeping for 3 seconds

[theora @ 0x1004000]done sleeping for 4 seconds

[theora @ 0x1004000]done sleeping for 5 seconds

[theora @ 0x1004000]thread 0 returned 2

[theora @ 0x1004000]thread 1 returned 3

[theora @ 0x1004000]thread 2 returned 4

[theora @ 0x1004000]thread 3 returned 5

Those “sleeping for n seconds…” lines are a bit suspicious. Could it be that av_log() is not thread-safe (i.e., reentrant)? The rest of the output seems to indicate that the threading is working as expected. At least, that’s my story and I’m sticking to it unless someone can kindly demonstrate that there’s a deeper bug. Fortunately, I don’t intend to do a lot of terminal output from my worker threads.

Emboldened by this simple experiment, I proceeded to multithread the VP3/Theora renderer. This is the relevant loop:

So the idea is to break that up into n threads where each thread renders (macroblock_height / n) slices. I almost have it working and it is measurably faster. Many frames seem to be correct but there are other frames that don’t pass my tests. So far, I have been performing optimization validation numerically rather than visually– I captured the framecrc output of 3 VP3/Theora files from before I started optimization work and have been comparing framecrc output after optimization. Some, but not all, of the CRCs aren’t passing when multithreaded.

Here’s a critical lesson I have learned about optimization: Don’t trust any profiling statistics until the code is actually correct. I’ve seen bugs go both for and against my favor in this respect. (I once had an optimization that mostly worked but was only marginally faster than the original code; when I ironed out a few minor bugs, it was more than 3x faster. On the other end of the spectrum, my first pass at this multithreaded renderer was impressively fast– until I noticed that it was only rendering the first 1/n part of the frame). With that in mind, I am seeing notable speedups with this multithreaded rendering. From the above CPU monitor screenshot, FFmpeg is using more than 1 CPU (and somehow spawns 7 threads with ‘-threads 2’). Decoding the first 60 seconds of the 1080p version of Big Buck Bunny went from 52.0s -> 47.7s (wall clock time) on my Core 2 Duo 2.0 GHz Mac Mini. Be advised that this doesn’t necessarily translate into realtime HD Theora playback. The command line I am using is:

ffmpeg -threads 2 -i big_buck_bunny_1080p_stereo.ogg -f yuv4mpegpipe -an -t 60 -y /dev/null

which decodes only the first minute of video (no audio) to raw data and dumps it directly to /dev/null.

So, when FFmpeg wants to multithread a program, it kicks off a set of homogenous functions in n threads (and the main thread blocks while the worker threads are busy). Is this the same spirit as functional programming? That’s a topic I have been wanting to study closer for a long time. FFmpeg’s model, however, makes it a bit difficult to follow through with my idea of processing the DC coefficient reversal in a separate thread after the quantized DC coefficients are decoded. It is possible that I could create 1 function to run in multiple threads that would do different things depending on the context data I pass in. I need to be sure that the threads don’t need to synchronize in any way since FFmpeg’s threading API has no provisions for that.

As an aside, it briefly struck me as ironic that people want FFmpeg to use as much of as many available CPUs as possible but scorn the project from my day job for being quite capable of doing the same.

Obviously, when you transcode you want to dedicate all resources to it. When you decode, you want lesser load on system. Especially in certain app case where there are usually some other task that should be done and some embedded player on web page eats all resources.

And, obligatory, http://xkcd.com/619/

You seem to try to create n workloads for n threads. This is almost certainly a bad idea, you should create as many separate workloads/tasks as possible (within reason, but one per slice is almost certainly within reason).

Why? For one, because it will lead to simpler and easier to test code (except for race conditions the code will always do the same no matter the number of threads), while due to FFmpeg using thread pools the overhead for additional tasks is almost 0.

But now for the real reason.

To make matters particularly clear, let us assume someone is using a two CPU (or two core) system with -threads 2. On one of the CPUs there is a antivirus scanner running, which being a good nice virus scanner uses up 75% of that CPUs processing power.

Now you split things into two parts, thus one thread will process half the image on the idle CPU and one will process the other half on the already 75% loaded CPU.

The thread on the previously idle CPU will finish after half the normal time, but the thread on the other CPU will only have handled 1/8 of a frame. Even if the scheduler immediately moves the other thread to the other CPU and assuming no issues due to caches, the overall runtime will be 1/2+3/8 = 7/8 (87.5 %) of the normal processing time.

Had you instead split the task into e.g. 100 independent slices, the first thread would have processed 80 and the second one 20, resulting in an overall time of only 80% of normal processing, about 9% faster, with simpler code to boot.

@Reimar: Sound theory. However, I didn’t have my cores loaded at the time so it wasn’t a factor when I did my initial tests. I retested with 17 threads to give a little more granularity in the BBB-1080p case but performance was a little worse on my dual core than with just 2 threads.

@Kostya: Thanks to the xkcd book tour this past week, I now own an autographed copy of that comic. :-)

Mike: not sure if I understood you right, but performance with 17 threads will likely be worse due to L1 and L2 thrashing by all those threads, this is not an issue with only 2 threads but more work units, which was my suggestion. This is one of the reasons why the “thread pool” approach is vastly superior to the “one thread per task” approach (in addition to issues like thread startup overhead and use of unswappable memory for in-kernel thread structures).

Maybe I should mention that many of the current implementations like dnxhdenc are counter-examples, i.e. how you’re not supposed to do it (well, they aren’t horrible, they are like what you were doing so far, but that approach is more complex and is at least as likely to be slower as it is likely to be faster).

Well that’s just great to know, Reimar, since libavcodec/dnxhdenc.c was my primary example for multithreading. :-)

You suggested one thread per task. The task is the rendering of an individual slice. There are 68 slices in a 1080p video and creating 17 tasks of 4 slices each moved us closer to the goal.

Great job ! I just wanted to thank you (Mike) for doing all these wonderful ffmpeg improvements. Thanks !

Another idea might be to have the worker threads query from a pool of available slices that still need to be processed. The problem there is that it requires some synchronization facilities which are not provided by FFmpeg’s threading API.

@Tom: Don’t get too excited. See my next post about how libtheora still wipes the floor with FFmpeg’s decoder.

Nooo! I did _not_ suggest 1 thread per task, I would suggest 1 thread per CPU _but_ more tasks than threads, actually as many tasks as possible (as long as less than several 1000).

And about your other idea: FFmpeg already implements that, that is exactly what I meant. FFmpeg does not provide those synchronization facilities because the framework already does the heavy lifting for you and you shouldn’t need to use them yourself.

I sent a patch for dnxhdenc to the FFmpeg list, I hope that should make it really clear what I meant :-)

I look forward to studying your patch because I really don’t understand what you’re driving at. :-)

There is one sillyness in ffmpeg’s thread pool functionality. The calling thread (ie the one providing the work items), goes idle, waiting for the work threads to finish, instead of joining in and doing work.

This aught to introduce a pointless context switch, and uneccessary overhead. I’m not sure how much it really matters, but i’ve always seen it as abit funky.

Another small note being that when the trigger thread signals worker threads to start, it is holding the protecting mutex. Thus depending on scheduling, the worker thread may wake up, and immidiatly go to sleep again trying to aquire the the mutex lock that the trigger thread is currently holding.

elupus: Huh? In the pthread implementation there is exactly one lock, and the lock must be held whenever waiting on conditionals, and for signaling conditions it is recommended by the pthread documentation for predictable scheduling behaviour.

Nevertheless there probably are some things that will need to be optimized for fine-grained parallelism, but so far we are talking about at most slice-level parallelism so it is unlikely to matter.

elpus: PThreads require you to be holding a mutex to wait for or signal a mutex. Any efficient implementation (E.G. Linux PThreads) will requeue all the waiting threads on the mutex rather than wake them. For the specifics of this mechanism, see the man page futex(2)

> There is one sillyness in ffmpeg’s thread pool functionality. The calling thread (ie the one providing the work items), goes idle, waiting for the work threads to finish, instead of joining in and doing work.

I think I posted a patch for this several years ago, but Michael didn’t approve it because he was afraid of any change to pthread code. It’s not a major problem anyway.

Theora is also multithreaded in ffmpeg-mt:

http://gitorious.com/~astrange/ffmpeg/ffmpeg-mt/commit/401a6bc7f0fe26963f63778c5092ae96c4262634

http://gitorious.com/~astrange/ffmpeg/ffmpeg-mt/commit/20997d60c8ec84dd0dd68055901e847c4b4e171a

The improvement wasn’t as good as MPEG-* (mostly since there’s no b-frames), but it’s still decent. I never benchmarked it past a dual-core, though.

Owen:

Asfar as I knew, there is no requirements to holding the mutex when signalling. There is when waiting obviously. Thou i’ve not read the pthread spec in detail on this. It does it’s required for predictable scheduling are required.

But you are probably right. If the implementation is smart it doesn’t wake the thread, but requeues it on the mutex instead.

> There are a few bits here and there in the encode or decode processes that can be parallelized but the entire operation overall tends to be rather serial.

You’d be surprised: x264, ffmpeg-mt, CoreAVC, and whatever DivX h.264 uses are powered by this marvelous thing called “frame-level multithreading”, which allows you to decode several frames at once (of course, having to take care of motion-compensation dependencies).

Sounds crazy, but how does it perform in practice? I took that “MV1_BRCM_D” sample you’re so fond of (note that it uses a single slice per frame) and run it in a 2GHz Core 2 Duo thingie:

CoreAVC, 1 thread: 63fps

CoreAVC, 2 threads: 114fps (81% speedup)

ffmpeg-mt, 1 thread: 51fps

ffmpeg-mt, 2 threads: 89fps (75% speedup)

x264, when encoding using high settings, can get into the 90%s easily, at basically no quality cost, without using slices.

I’ve heard some rumors of people breaking the 500fps barrier on Core i7s for 1080p videos, for a speedup factor of nearly 8 on this CPU with 8 physical cores handling two threads each.

So yeah, serial… within a frame :)

NB: I only have a passing familiarity with ffmpeg-mt but I suddenly became a lot more interested thanks to your comment. I suppose if the proper mt architecture were in place, the Theora decoding process could be broken into 2 phases: stream decode and reconstruction. Stream decoding of n successive frames would be highly parallelizable. Only the reconstruction phase needs to be serial due to requiring information from previous frames, and that can still be parallelized somewhat as outlined in this post.