Rewind to 1999. I was developing an HTTP-based remote management interface for an embedded device. The device sat on an ethernet LAN and you could point a web browser at it. The pitch was to transmit an image of the device’s touch screen, and the user would click on the picture to interact with the device. So we needed an image format. If you were computing at the time, you know that the web was insufferably limited back then. Our choice basically came down to GIF and JPEG. Being the office’s annoying free software zealot, I was championing a little-known, up-and-coming format named PNG.

So the challenge was to create our own PNG encoder (incorporating a library like libpng wasn’t an option for this platform). I seem to remember being annoyed at having to implement an integrity check (CRC) for the PNG encoder. It’s part of the PNG spec, after all. It just seemed so redundant. At the time, I reasoned that there were 5 layers of integrity validation in play.

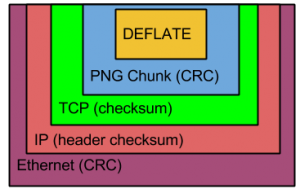

I don’t know why, but I was reflecting on this episode recently and decided to revisit it. Here are all the encapsulation layers of a PNG file when flung over an ethernet network:

So there are up to 5 encapsulations for the data in this situation. At the innermost level is the image data which is compressed with the zlib DEFLATE method. At first, I thought that this also had a CRC or checksum. However, in researching this post, I couldn’t find any evidence of such an integrity check. Further, I don’t think we bothered to compress the PNG data in this project long ago. It was a small image, monochrome, and transferring via LAN, so the encoder could get away with signaling uncompressed data.
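For the curious, here is a minimal Python sketch of what "signaling uncompressed data" can look like. It is not the encoder from that project; it assumes the image bytes are wrapped in a zlib stream built only from "stored" deflate blocks, and the function name `zlib_stored` is made up for illustration. Note that the zlib wrapper itself ends with an Adler-32 of the uncompressed data, even when nothing is compressed.

```python
import struct
import zlib

def zlib_stored(data: bytes) -> bytes:
    """Wrap data in a zlib stream using only uncompressed (stored) deflate blocks."""
    out = bytearray(b"\x78\x01")  # zlib header: deflate method, 32K window, no dictionary
    # A stored block carries at most 65535 bytes; always emit at least one block.
    chunks = [data[i:i + 65535] for i in range(0, len(data), 65535)] or [b""]
    for index, chunk in enumerate(chunks):
        final = 1 if index == len(chunks) - 1 else 0
        out.append(final)  # BFINAL bit plus BTYPE=00 (stored), padded to a full byte
        out += struct.pack("<HH", len(chunk), len(chunk) ^ 0xFFFF)  # LEN and its complement
        out += chunk
    out += struct.pack(">I", zlib.adler32(data))  # zlib trailer: Adler-32, big-endian
    return bytes(out)

# Any standard zlib decoder should accept the result.
pixels = bytes(64)  # hypothetical tiny monochrome image payload
assert zlib.decompress(zlib_stored(pixels)) == pixels
```

The output is 11 bytes larger than the input for anything up to 65535 bytes: a 2-byte header, 5 bytes of block framing, and the 4-byte checksum.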

The graphical data gets wrapped up in a PNG chunk, and all PNG chunks have a CRC. To transmit via the network, it goes into a TCP segment, which also has a checksum. That goes into an IP packet. I previously believed that this represented another integrity check. While an IP packet does have a checksum, the checksum only covers the IP header and not the payload. So that doesn’t really count towards this goal.
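To make two of these checks concrete, here is a rough Python sketch. The helper names (`png_chunk`, `chunk_is_valid`, `internet_checksum`) are invented for this example. The first two follow the PNG chunk layout of length, type, data, then a CRC-32 over type and data; the third is the 16-bit ones' complement sum from RFC 1071 that IP applies to its header only.

```python
import struct
import zlib

def png_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Build a PNG chunk: 4-byte length, 4-byte type, data, CRC-32 of type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)

def chunk_is_valid(chunk: bytes) -> bool:
    """Recompute the CRC over type + data and compare it with the stored one."""
    (length,) = struct.unpack(">I", chunk[:4])
    (stored,) = struct.unpack(">I", chunk[8 + length:12 + length])
    return zlib.crc32(chunk[4:8 + length]) & 0xFFFFFFFF == stored

def internet_checksum(data: bytes) -> int:
    """RFC 1071 checksum: ones' complement of the ones' complement sum of 16-bit words."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = sum(struct.unpack(f">{len(data) // 2}H", data))
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF

# The empty IEND chunk that ends every PNG file.
assert png_chunk(b"IEND", b"") == bytes.fromhex("0000000049454e44ae426082")
```

Since `internet_checksum` would be fed only the 20-odd header bytes of an IP packet, flipping a bit in the payload leaves the IP checksum unchanged, which is why that layer does not count here.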

Finally, the data gets encapsulated into an ethernet frame which has — you guessed it — a CRC.

I see that other link layer protocols like PPP and wireless ethernet (802.11) also feature frame CRCs. So I guess what I’m saying is that, if you transfer a PNG file over the network, you can be confident that the data will be free of any errors.

I expect you meant this jokingly, but:

Due to the very structured behaviour of CRCs, running a CRC over data that already includes a CRC will generally not improve error resilience at all or, if you are lucky, only minimally.

So in that respect, several CRCs are just a waste of space; the only reason to have so many is the layering. It’s unfortunate, but usually the only thing layering helps with is complexity. In most other respects it tends to be quite wasteful.

The deflate compression format (RFC 1951) does not have a checksum. The zlib compressed data encapsulation (RFC 1950) is deflate data plus an ADLER32 checksum. The gzip compressed data encapsulation (RFC 1952) is deflate data plus a CRC32 checksum. Because Microsoft misunderstood the HTTP spec, they implemented zlib as raw deflate and caused compatibility problems between web servers and web browsers, so the web has used gzip instead of the zlib format ever since, wasting a few bytes and using a stronger checksum. Since then, a better CRC polynomial has been found, and Intel implemented the new (incompatible) crc32c in hardware, making it essentially free performance-wise. Newer data formats use that instead of the crc32 used in ethernet, gzip and zip files.
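The three containers can be compared with Python's `zlib` module, where the `wbits` argument selects the wrapper (negative for raw deflate, 15 for zlib, 31 for gzip). This is only a sketch; the helper names `deflate` and `trailers` are made up for illustration.

```python
import struct
import zlib

def deflate(data: bytes, wbits: int) -> bytes:
    """Compress with deflate; wbits selects the container around the deflate data."""
    comp = zlib.compressobj(9, zlib.DEFLATED, wbits)
    return comp.compress(data) + comp.flush()

def trailers(data: bytes) -> dict:
    """Extract the integrity fields that each container appends after the deflate data."""
    raw = deflate(data, -15)  # RFC 1951: raw deflate, no header and no checksum
    zl = deflate(data, 15)    # RFC 1950: header + deflate + Adler-32 (big-endian)
    gz = deflate(data, 31)    # RFC 1952: header + deflate + CRC-32 + length (little-endian)
    gz_crc, gz_size = struct.unpack("<II", gz[-8:])
    return {
        "raw_len": len(raw),
        "zlib_adler32": struct.unpack(">I", zl[-4:])[0],
        "gzip_crc32": gz_crc,
        "gzip_isize": gz_size,
    }

sample = b"PNG over HTTP over TCP over IP over ethernet. " * 20
fields = trailers(sample)
assert fields["zlib_adler32"] == zlib.adler32(sample)
assert fields["gzip_crc32"] == zlib.crc32(sample)
```

The raw stream has no such field to extract, which is the point: a decoder handed raw deflate has to rely on some outer layer for integrity.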

Note that calling CRC32 a “stronger checksum” should be done with care; it can easily lead to misunderstandings.

CRCs have very nice properties that make it easy to prove things and to guarantee that certain kinds of errors will be detected. This is very useful for actual hardware that would be likely to produce these kinds of errors.

However, CRC32 is actually decidedly weaker than Adler32 in some contexts, e.g. if errors could be introduced by software bugs. The reason is that the CRC over data that already has its own CRC appended is always the same fixed value (0 for a plain CRC with no final inversion), whatever the data is. As a result, any data corruption that replaces a CRC-checksummed part by a different CRC-checksummed piece of data will _never_ be detected.

So (assuming TCP and PNG use the same checksum) swapping two PNG chunks inside a single TCP packet would always go completely undetected.

Adler32 does not have this specific issue and thus actually is much better if there is a significant risk that this kind of error might occur.
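A small Python experiment illustrates the property, under some loudly stated assumptions: it uses the CRC-32 from `zlib`, appends each inner CRC in little-endian byte order (the order in which this property holds for `zlib.crc32`; PNG itself stores its CRC most significant byte first, so this is not PNG's exact layout), and swaps two sealed blocks of equal length. The names `seal` and `outer_sums` are invented for the example.

```python
import struct
import zlib

# zlib.crc32 of any block followed by its own little-endian CRC-32.
CRC32_RESIDUE = 0x2144DF1C

def seal(payload: bytes) -> bytes:
    """Append the payload's CRC-32 in little-endian byte order."""
    return payload + struct.pack("<I", zlib.crc32(payload) & 0xFFFFFFFF)

def outer_sums(blob: bytes):
    """Return the (CRC-32, Adler-32) pair an outer layer might compute over blob."""
    return zlib.crc32(blob), zlib.adler32(blob)

a = seal(b"chunk number one")  # 16 payload bytes + 4 CRC bytes
b = seal(b"another chunk 02")  # same length, different content

# Each sealed block on its own always checks out to the same constant.
assert zlib.crc32(a) == CRC32_RESIDUE
assert zlib.crc32(b) == CRC32_RESIDUE

# Swapping the two sealed blocks inside a larger message leaves the outer CRC-32 unchanged.
original = b"header" + a + b + b"trailer"
swapped = b"header" + b + a + b"trailer"
assert outer_sums(original)[0] == outer_sums(swapped)[0]
```

Comparing the second element of `outer_sums` for the two messages shows whether Adler32 notices the swap; it depends on the byte positions, so it generally will unless the two blocks happen to have the same byte sum.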

Or, to make it short: if you qualify checksums as “strong” or “weak” without considering at least either the potential inputs or the errors to protect against, you are doing it wrong.

Integrity checks were present through so much of the storage networking stack that the original Fibre Channel design omitted them as unnecessary latency.

@Ian: Interesting to learn. As I was writing this post, I wondered how various filesystems and physical storage mechanisms added to the integrity redundancy. I know a typical CD-ROM will have several layers of error checking.

I’m told that the Bluetooth stack positively drips with integrity checks, and that the volume of integrity-checking data has a not-insignificant impact on its throughput.

I think there might be some confusion.

CRC is indeed only error detection; however, wireless protocols (Bluetooth or ordinary Wi-Fi, it doesn’t change that much I think) and data storage use large amounts of error-correcting codes.

Their purpose is to avoid, e.g., retransmitting large amounts of data because of small errors, or having a small scratch permanently damage a CD/DVD.

That usually means they only make sense on a physical layer where errors are actually expected, so they don’t suffer that often from being duplicated on each layer.