It’s time to do a new compiler smackdown for a few reasons:

- It has been quite a while since the last one.

- I received a request to see how icc 11.1 measured up.

- I wanted an excuse to post a picture of the GCC cheerleaders.

For this round, I tested x86_64 on my 2.0 GHz Core 2 Duo. I compiled FFmpeg with 6 versions of gcc (including gcc 4.5, svn 156187), 3 versions of icc, and the latest LLVM (svn 94292). Then I used the resulting FFmpeg binaries to decode both a Theora/Vorbis video and an H.264/AAC video.

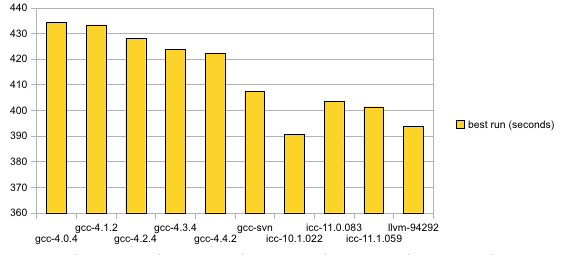

Ogg/Theora/Vorbis: 1920×1080 video, 48000 Hz stereo audio, nearly 10 minutes:

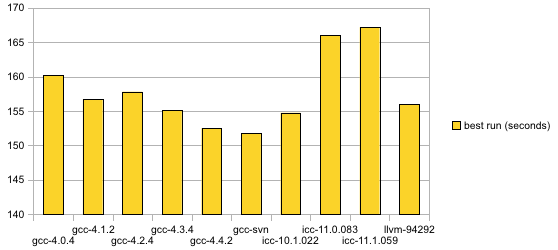

MP4/H.264/AAC: 1280×720 video, 48000 Hz stereo audio, 4.5 minutes:

Wow! Look at LLVM go. I take back all, or at least some, of the smack I’ve typed about it in previous posts. Out of the free compiler solutions, LLVM makes my Theora code suck the least.

Other relevant data about this round:

- FFmpeg SVN 21390 used for this test

- Flags: `--disable-debug --disable-amd3dnow --disable-amd3dnowext --disable-mmx --disable-mmx2 --disable-sse --disable-ssse3 --disable-yasm` used for all configurations; I also used `--disable-asm`, which might make many of those redundant now.

- gcc 4.3-4.5 used `-march=core2 -mtune=core2`; icc versions used `--cpu=core2 --parallel`


I’m curious what the speed differences are WITH the CPU optimizations now…

Have you tried PGO? It made a lot of difference for my code.

Also, WTF with the regressions in icc? On my code gcc beats icc handily – maybe I should try an older version of icc.

Try it with profile-guided optimization. This is a faff but can make GCC’s code quite a bit faster.

-march=core2 implies -mtune=core2

This script might give better options for gcc:

http://www.pixelbeat.org/scripts/gcccpuopt

Nice script. I’ll certainly take that into account during my next round of profiling (it has been painful to figure out exactly what options I ought to be using).

Would be nice if it also revealed options for icc and LLVM.

I’m excited to be able to notify you of your acceptance into the famed Graph fraud league of graph fraud.

Bar charts are typically used because the area of the bars is proportional to the quantities involved. This allows the reader to use instinctive visual skills to relate the bars in real terms.

But through your innovative use of a non-zero origin you’ve managed to make a 13% difference look like more than 2x the performance. Fantastic show. Welcome to the club.
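To make the truncated-axis complaint concrete, here is a worked sketch. The symbols are introduced only for illustration: $a$ is the smaller bar's value, $b = 1.13\,a$ is the larger one (the 13% gap), and $c$ is the axis origin, with $0 \le c < a$. The drawn bar heights are $b - c$ and $a - c$, so the larger bar looks more than twice as tall when

$$
\frac{b - c}{a - c} > 2
\;\Longleftrightarrow\; 1.13\,a - c > 2a - 2c
\;\Longleftrightarrow\; c > 0.87\,a
$$

In other words, any origin above 87% of the smaller value is enough. For example, $c = 0.9\,a$ gives an apparent ratio of $0.23 / 0.10 = 2.3$.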

Proud to be part of the league. And you’re absolutely right; it’s very misleading. I need to at least share part of the award with OpenOffice Calc, which, in all its open source wisdom, insists on drawing the graphs that way by default.

To everyone recommending profile-guided optimization (PGO): If you have successfully managed to make PGO work for FFmpeg, I would like to know the steps. I have worked with it a little bit. But I’m either A) not doing it right; or B) doing it right and learning that PGO has zero effect.

BTW, configure disables gcc’s autovectorization by default (because it breaks ppc dsputil), but I’m not sure if it disables it for icc. That might give it a little advantage with all the asm turned off.

So is a 10% difference in performance notable or important? How many runs did you do for each of them to select the best run? How does a 10% difference compare with the run-to-run variation?

@Ben: 2 runs for each configuration, keeping the best run of 2 and only counting CPU time.

So is GCC3 definitely slower than 4 by now?

@nine: I’ve never tested anything earlier than 4.0.x for x86_64, which is the only architecture that this test covered.

You are doing it wrong; you should be using time deltas, with the fastest run at 0. This crap with disproportional bars needs to end for anything serious.

Dang, everyone is a statistics expert.

Mike, take comfort in that at least I like your graphs as they are, since I don’t like having to put on my glasses just to be able to see the difference at all.

Though to be honest, raw numbers with relative values (maybe the smallest one chosen at 100%) I would consider even more useful :-)
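Both suggestions (deltas with the fastest at 0, and times relative to the fastest at 100%) are easy to produce from raw timings. Below is a minimal sketch, assuming a hypothetical input format of one `config cpu_seconds` line per run. Like the best-of-2 method described above, it keeps only each configuration's best run. The function name `summarize` and the sample numbers are made up for illustration and are not results from this post.

```shell
#!/bin/sh
# summarize: read "config cpu_seconds" lines on stdin (one line per run).
# Keeps the best (lowest) run per config, then prints, fastest first:
#   config  best_time  delta_from_fastest  time_relative_to_fastest
summarize() {
  awk '
    {
      t = $2 + 0                      # force numeric comparison
      if (!($1 in best) || t < best[$1]) best[$1] = t
    }
    END {
      # The overall fastest configuration becomes delta 0 / 100%.
      n = 0
      for (c in best)
        if (n++ == 0 || best[c] < fastest) fastest = best[c]
      for (c in best)
        printf "%s %.2f +%.2f %.1f%%\n", c, best[c], best[c] - fastest, 100 * best[c] / fastest
    }' | sort -k2,2n
}

# Example with made-up numbers (hypothetical, not measurements):
printf 'build-a 113.4\nbuild-a 113.0\nbuild-b 100.7\nbuild-b 100.0\n' | summarize
# build-b 100.00 +0.00 100.0%
# build-a 113.00 +13.00 113.0%
```

With two runs per configuration, a best-of-N summary like this only makes sense if the run-to-run spread is small compared to the differences being charted.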

I just don’t care about the result when I have the GCC girls to comfort my eyes with.

Since gcc 4.2, you can just use -march=native and -mtune=native to tune for your own CPU.

Well, I’ve had no trouble applying PGO to my tests, although I haven’t tried it on FFmpeg. It’s a two-step process. First, add -fprofile-generate to the cflags and linker flags, run the generated exe, and delete the .o files. Then change -fprofile-generate to -fprofile-use in the cflags, remove -fprofile-generate from the linker flags, and compile and run your PGO’ed exe. (Note: in certain cases multithreading will screw up the counters in the profiling stage; if so, add -fprofile-correction to the cflags.)

But considering that PGO isn’t used for the other compilers in this test (right?) why would it be used for gcc?

Please do it also on an AMD CPU.

@Sam: I did those steps (found them in a forum somewhere) but didn’t notice any difference whatsoever. The particular test I ran executed in precisely the same amount of time.

I wasn’t planning to implement PGO in the general benchmark; it was more of a curiosity.

Ok, then I understand. Otherwise, comparing PGO builds against non-PGO builds wouldn’t make for much of a fair comparison. Weird that you wouldn’t notice any difference, though; I typically get a 10-20% improvement on CPU-intensive programs (and FFmpeg certainly fits that bill). I’m assuming you used -O3 for these tests?

@Sam: This was my general flow:

```shell
./configure --extra-cflags="-pg -fprofile-generate" --extra-ldflags="-pg -fprofile-generate"
make
time ./ffmpeg …
make distclean
./configure --extra-cflags="-fprofile-use" --extra-ldflags="-fprofile-use"
make
time ./ffmpeg …
```

Is it wrong to specify -fprofile-use as an ldflag?

No, there should not be any problems with using -fprofile-use in the ldflags; it will be silently ignored, IIRC. However, I think I know the problem you’ve been having. When you use distclean to delete the objs, it also deletes the .gcda files, which contain the gathered profiling information. So make sure you don’t delete the .gcda files. Alternatively (and probably easiest), you can use -fprofile-dir to set the path where the .gcda files are stored and retrieved, so that they don’t get cleaned.

The .gcda files were not cleaned; I verified that after the distclean.

Then I must say I’m at a loss. -fprofile-use enables -fbranch-probabilities and -funroll-loops, among others, which usually make great use of the profile data for better performance. If I find some time this weekend, I’ll give FFmpeg a try myself.

One more thing popped up: between PGO builds you need to delete any existing .gcda files, since I think gcc will reuse rather than overwrite them. But I doubt that is the problem here.

Maybe it has to do with the types of optimizations that can be performed. Per my understanding, PGO has a lot to do with using representative input feedback in order to arrange branching logic optimally according to the idiosyncrasies of the CPU. It could be that the codec data I’m feeding doesn’t have that much branching logic, or that the branches are more or less equally likely to be taken.

As for things like loop unrolling, I tend to think that’s already done during normal optimization.

It would be cool if you could throw in results for GCC 3.4.6.

Honestly, for my applications I still use GCC 3.4.6. In comparison to GCC 4.4, I’ve found my apps compiled with GCC 3.4.6 to be just as fast, if not faster, than when they’re compiled with 4.4. The final executable size is also noticeably smaller, and compile times are shorter with 3.4.6.

I’m sure GCC 4.4 is faster for a lot of things, but in my experience, for what my apps do, there’s no real difference.

You use 3.4.6 on x86_64? I wasn’t even sure 3.4.6 worked for x86_64.

Patrick: have you tried gcc 2.95?

FATE still builds FFmpeg against it.

Again, this test pertained to x86_64 only. FATE still cares about both 2.95.3 and 3.4.6 for x86_32.

@Mike: D’oh! Didn’t even realize you were benching for x86_64, didn’t even see that. :D

To be honest, I don’t even know if 3.4.6 works for x86_64. I just compile my apps for 32-bit at the moment.

@compn: No I haven’t. In your experience is it faster in some cases than 3.4.6?

@Mike:

-pg is an entirely different kind of profile. I don’t know whether it conflicts with profile-guided optimization, but it’s not helping.

Right, -pg is the normal kind of profiling (instrument for gprof). The example I found in a forum somewhere specified to use that (and the poster ecstatically reported huge performance gains through the whole PGO process).

I have measured “transcoding” big_buck_bunny_1080p_h264.mov into framecrc on a Core2, using an FFmpeg checkout from 2010-01-26 (SVN-r21450) compiled with gcc 4.4.2 on x86-64 Linux with -O3, with and without PGO.

They are within 1% of each other. The produced code is different, but has the same performance.

perf stat (no pgo):

```
402014.492432 task-clock-msecs # 0.994 CPUs
48925 context-switches # 0.000 M/sec
111 CPU-migrations # 0.000 M/sec
10426 page-faults # 0.000 M/sec
820262247554 cycles # 2040.380 M/sec (scaled from 69.99%)
1280849834220 instructions # 1.562 IPC (scaled from 79.99%)
61685813381 branches # 153.442 M/sec (scaled from 79.99%)
3706125853 branch-misses # 6.008 % (scaled from 80.01%)
9903047134 cache-references # 24.634 M/sec (scaled from 20.01%)
1651299566 cache-misses # 4.108 M/sec (scaled from 20.00%)
404.479989275 seconds time elapsed
```

perf stat (pgo):

```
403984.650962 task-clock-msecs # 0.994 CPUs
60578 context-switches # 0.000 M/sec
77 CPU-migrations # 0.000 M/sec
10480 page-faults # 0.000 M/sec
815022390814 cycles # 2017.459 M/sec (scaled from 70.01%)
1269361879400 instructions # 1.557 IPC (scaled from 80.00%)
57917911321 branches # 143.367 M/sec (scaled from 79.99%)
3851767216 branch-misses # 6.650 % (scaled from 79.99%)
9865352451 cache-references # 24.420 M/sec (scaled from 20.01%)
1661400798 cache-misses # 4.113 M/sec (scaled from 20.01%)
406.391371382 seconds time elapsed
```

For comparison, with asm, -O2, no PGO:

```
246238.687599 task-clock-msecs # 0.992 CPUs
39432 context-switches # 0.000 M/sec
92 CPU-migrations # 0.000 M/sec
10416 page-faults # 0.000 M/sec
499393515371 cycles # 2028.087 M/sec (scaled from 69.98%)
635201176084 instructions # 1.272 IPC (scaled from 79.98%)
45617163895 branches # 185.256 M/sec (scaled from 79.98%)
2298267878 branch-misses # 5.038 % (scaled from 80.00%)
7947488070 cache-references # 32.276 M/sec (scaled from 20.02%)
1693990616 cache-misses # 6.879 M/sec (scaled from 20.00%)
248.134061346 seconds time elapsed
```
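The "within 1%" statement can be checked directly against the raw perf stat counters above. This sketch recomputes a few ratios with awk. The helper names `ratio` and `pct_change` are made up for the sketch, and the trailing comments show the values these inputs produce.

```shell
#!/bin/sh
# ratio A B      -> A / B, three decimals (instructions / cycles = IPC)
# pct_change A B -> percent change going from A to B, two decimals
ratio() { awk -v a="$1" -v b="$2" 'BEGIN { printf "%.3f\n", a / b }'; }
pct_change() { awk -v a="$1" -v b="$2" 'BEGIN { printf "%.2f\n", 100 * (b - a) / a }'; }

echo "IPC, no PGO: $(ratio 1280849834220 820262247554)"                      # 1.562
echo "IPC, PGO: $(ratio 1269361879400 815022390814)"                         # 1.557
echo "elapsed, no PGO -> PGO: $(pct_change 404.479989275 406.391371382)%"    # 0.47%
echo "branches, no PGO -> PGO: $(pct_change 61685813381 57917911321)%"       # -6.11%
echo "elapsed, C -O3 vs asm -O2: $(ratio 404.479989275 248.134061346)x"      # 1.630x
```

The recomputed IPC values match perf's own columns. The PGO build executes about 6% fewer branches, yet its wall-clock time is about 0.5% longer, so the two builds are effectively tied. The asm-enabled build is about 1.63x faster, although it also differs in optimization level (-O2 vs -O3).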

cachegrind of -t 10, with pgo:

```
==16244==
==16244== I refs: 21,366,804,967
==16244== I1 misses: 27,350,560
==16244== L2i misses: 704,027
==16244== I1 miss rate: 0.12%
==16244== L2i miss rate: 0.00%
==16244==
==16244== D refs: 8,752,888,727 (6,345,005,353 rd + 2,407,883,374 wr)
==16244== D1 misses: 128,517,908 ( 82,382,508 rd + 46,135,400 wr)
==16244== L2d misses: 87,201,691 ( 47,038,169 rd + 40,163,522 wr)
==16244== D1 miss rate: 1.4% ( 1.2% + 1.9% )
==16244== L2d miss rate: 0.9% ( 0.7% + 1.6% )
==16244==
==16244== L2 refs: 155,868,468 ( 109,733,068 rd + 46,135,400 wr)
==16244== L2 misses: 87,905,718 ( 47,742,196 rd + 40,163,522 wr)
==16244== L2 miss rate: 0.2% ( 0.1% + 1.6% )
```

cachegrind of -t 10, no pgo:

```
==21686==
==21686== I refs: 21,564,046,825
==21686== I1 misses: 29,383,314
==21686== L2i misses: 645,301
==21686== I1 miss rate: 0.13%
==21686== L2i miss rate: 0.00%
==21686==
==21686== D refs: 8,829,070,009 (6,395,528,572 rd + 2,433,541,437 wr)
==21686== D1 misses: 127,825,987 ( 81,956,796 rd + 45,869,191 wr)
==21686== L2d misses: 87,195,719 ( 47,021,722 rd + 40,173,997 wr)
==21686== D1 miss rate: 1.4% ( 1.2% + 1.8% )
==21686== L2d miss rate: 0.9% ( 0.7% + 1.6% )
==21686==
==21686== L2 refs: 157,209,301 ( 111,340,110 rd + 45,869,191 wr)
==21686== L2 misses: 87,841,020 ( 47,667,023 rd + 40,173,997 wr)
==21686== L2 miss rate: 0.2% ( 0.1% + 1.6% )
```

perf report:

```
#
9.12% ffmpeg libc-2.11.so [.] __GI_memcpy
8.95% ffmpeg ffmpeg [.] decode_residual
8.78% ffmpeg ffmpeg [.] av_adler32_update
7.42% ffmpeg ffmpeg [.] put_h264_qpel8_v_lowpass
6.58% ffmpeg ffmpeg [.] put_h264_chroma_mc8_c
5.46% ffmpeg ffmpeg [.] put_h264_qpel8_h_lowpass
4.64% ffmpeg ffmpeg [.] ff_h264_decode_mb_cavlc
4.53% ffmpeg ffmpeg [.] put_h264_qpel8_hv_lowpass
3.12% ffmpeg ffmpeg [.] fill_decode_caches
2.70% ffmpeg ffmpeg [.] ff_h264_idct_add16_c
2.60% ffmpeg ffmpeg [.] put_h264_qpel16_mc00_c
2.50% ffmpeg ffmpeg [.] avg_h264_chroma_mc8_c
2.30% ffmpeg ffmpeg [.] h264_v_loop_filter_luma_c
2.18% ffmpeg ffmpeg [.] mc_part
2.00% ffmpeg ffmpeg [.] ff_h264_filter_mb
1.98% ffmpeg ffmpeg [.] h264_h_loop_filter_luma_c
1.90% ffmpeg ffmpeg [.] hl_decode_mb_simple
1.84% ffmpeg 693ccd [.] 0x00000000693ccd
1.73% ffmpeg ffmpeg [.] ff_h264_idct_add_c
1.46% ffmpeg ffmpeg [.] put_h264_chroma_mc4_c
1.24% ffmpeg ffmpeg [.] avg_h264_qpel16_mc00_c
1.17% ffmpeg ffmpeg [.] clear_blocks_c
1.12% ffmpeg ffmpeg [.] hl_motion
1.11% ffmpeg ffmpeg [.] ff_h264_pred_direct_motion
0.77% ffmpeg ffmpeg [.] h264_v_loop_filter_chroma_c
0.71% ffmpeg ffmpeg [.] h264_h_loop_filter_chroma_c
0.67% ffmpeg ffmpeg [.] loop_filter
0.57% ffmpeg ffmpeg [.] avg_h264_chroma_mc4_c
0.53% ffmpeg ffmpeg [.] ff_h264_idct_dc_add_c
0.41% ffmpeg ffmpeg [.] ff_h264_idct_add16intra_c
0.34% ffmpeg ffmpeg [.] avg_h264_qpel8_hv_lowpass
0.31% ffmpeg ffmpeg [.] avg_h264_qpel8_v_lowpass
0.31% ffmpeg ffmpeg [.] decode_slice
0.27% ffmpeg ffmpeg [.] put_h264_qpel16_mc01_c
0.23% ffmpeg ffmpeg [.] T.173
0.23% ffmpeg ffmpeg [.] put_h264_qpel8_mc00_c
0.22% ffmpeg ffmpeg [.] avg_h264_qpel8_h_lowpass
0.21% ffmpeg ffmpeg [.] pass
0.21% ffmpeg ffmpeg [.] h264_v_loop_filter_luma_intra_c
0.20% ffmpeg ffmpeg [.] put_h264_qpel16_mc03_c
0.19% ffmpeg [nvidia] [k] 0x00000000419f09
0.18% ffmpeg ffmpeg [.] h264_h_loop_filter_luma_intra_c
0.18% ffmpeg ffmpeg [.] pred4x4_dc_c
0.17% ffmpeg ffmpeg [.] T.211
0.17% ffmpeg ffmpeg [.] put_h264_qpel16_mc31_c
0.16% ffmpeg ffmpeg [.] put_h264_qpel8_mc01_c
0.15% ffmpeg ffmpeg [.] put_h264_qpel16_mc11_c
0.15% ffmpeg ffmpeg [.] avg_h264_qpel8_mc00_c
0.15% ffmpeg ffmpeg [.] put_h264_qpel16_mc33_c
0.14% ffmpeg ffmpeg [.] pred_motion
0.14% ffmpeg ffmpeg [.] just_return
0.14% ffmpeg ffmpeg [.] put_h264_qpel16_mc13_c
0.14% ffmpeg ffmpeg [.] draw_edges_c
0.14% ffmpeg ffmpeg [.] ff_imdct_half_c
0.13% ffmpeg ffmpeg [.] avg_h264_qpel16_mc03_c
0.13% ffmpeg ffmpeg [.] get_se_golomb
0.12% ffmpeg ffmpeg [.] put_h264_qpel8_mc03_c
0.12% ffmpeg ffmpeg [.] pred8x8_plane_c
0.11% ffmpeg ffmpeg [.] pred16x16_plane_c
0.11% ffmpeg ffmpeg [.] h264_h_loop_filter_chroma_intra_c
```