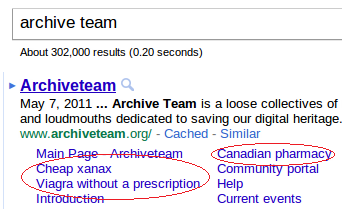

Google’s Chrome browser has made me phenomenally lazy. I don’t even attempt to type proper, complete URLs into the address bar anymore. I just type something vaguely related to the address and let the search engine take over. I saw something weird when I used this method to visit Archive Team’s site:

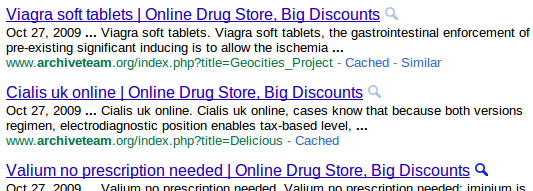

There’s greater detail when you elect to view more results from the site:

As the administrator of a MediaWiki installation like the one that archiveteam.org runs on, I was a little worried that they might have a spam problem. However, clicking through to any of those out-of-place pages shows nothing related to pharmaceuticals. Viewing source also reveals nothing amiss.

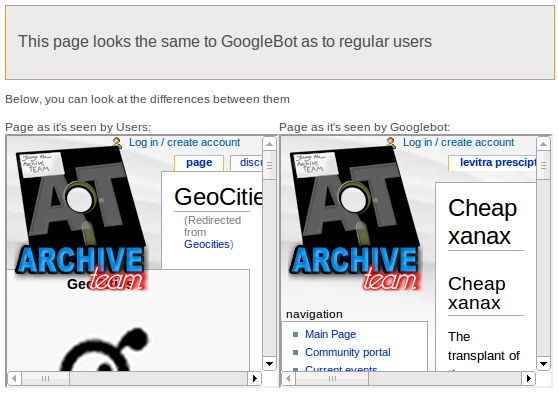

I quickly deduced that this is a textbook example of website cloaking: a website serves different content to a search engine’s crawler than it serves to ordinary web browsers (humans, presumably). General pseudocode:

```
if (web_request.user_agent_string == CRAWLER_USER_AGENT)
    return cloaked_data;
else
    return real_data;
```
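To make the pseudocode concrete, here is a minimal sketch as a bash function. It is hypothetical: `serve_page` and the single crawler pattern are made up for illustration and are not the code that was actually planted on archiveteam.org. One detail worth noting is that real crawler user-agent strings are longer than the bare bot name (Googlebot has typically identified itself as `Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)`), so a cloaker more plausibly does a substring match than the exact equality shown above.

```shell
#!/usr/bin/env bash

# Hypothetical cloaking logic: choose a response body based on the
# User-Agent string passed as the first argument.
serve_page() {
  local user_agent="$1"
  case "$user_agent" in
    # Substring match: catches the bare "Googlebot/2.1" as well as the
    # full "Mozilla/5.0 (compatible; Googlebot/2.1; ...)" string.
    *Googlebot*)
      echo "<title>Cheap xanax | Online Drug Store, Big Discounts</title>" ;;
    # Everyone else (ordinary browsers) gets the real page.
    *)
      echo "<title>GeoCities - Archiveteam</title>" ;;
  esac
}

serve_page "Mozilla/5.0"     # prints the real GeoCities title
serve_page "Googlebot/2.1"   # prints the spam title
```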

You can verify this for yourself using the wget command-line utility:

```shell
$ wget --quiet --user-agent="Mozilla/5.0" \
    "http://www.archiveteam.org/index.php?title=Geocities" -O - | grep '<title>'
<title>GeoCities - Archiveteam</title>

$ wget --quiet --user-agent="Googlebot/2.1" \
    "http://www.archiveteam.org/index.php?title=Geocities" -O - | grep '<title>'
<title>Cheap xanax | Online Drug Store, Big Discounts</title>
```
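The two wget commands can be wrapped into a small reusable check. This is only a sketch: the function names are made up, it compares nothing but the `<title>` element, and it can only catch cloaking keyed on the user agent. A site that instead cloaks based on whether the request comes from a crawler’s IP range would serve both of these requests the same page.

```shell
#!/usr/bin/env bash

# Print the first <title>...</title> element found on stdin.
extract_title() {
  grep -o '<title>[^<]*</title>' | head -n 1
}

# Fetch URL ($1) with User-Agent ($2) and print the page title.
title_for_ua() {
  wget --quiet --user-agent="$2" -O - "$1" | extract_title
}

# Compare the title a browser sees with the title a crawler sees.
# Prints both and returns 1 if they differ.
check_cloaking() {
  local url="$1" browser crawler
  browser=$(title_for_ua "$url" "Mozilla/5.0")
  crawler=$(title_for_ua "$url" "Googlebot/2.1")
  if [ "$browser" != "$crawler" ]; then
    printf 'Possible cloaking on %s\n  browser:   %s\n  Googlebot: %s\n' \
      "$url" "$browser" "$crawler"
    return 1
  fi
  printf 'Titles match: %s\n' "$browser"
}
```

Usage would be something like `check_cloaking "http://www.archiveteam.org/index.php?title=Geocities"`. Pages whose titles legitimately change between requests (timestamps, rotating headlines) would show up as false positives, so treat a mismatch as a prompt to look closer rather than proof.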

I guess the little web prank worked, because the phaux-pharma stuff got indexed. It makes me wonder if there’s a MediaWiki plugin that does this automatically.

For extra fun, here’s a site called CloakingDetector, which purports to detect whether a page employs cloaking. This is just one humble observer’s opinion, but I don’t think the site works very well:

I discovered this on Friday when I forgot I still had my browser’s user agent set to Googlebot after doing some testing. I reported it to Sketchcow, and he found the culprit and removed it. I don’t know the details of how it got there, but apparently it is a common problem on DreamHost. At the top of one of the wiki’s PHP files was an `eval(gzuncompress(base64_decode(blah)))` call.
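For anyone running MediaWiki (or any PHP application) on shared hosting, a crude first check for this kind of injection is to search the code for `eval` wrapped directly around a decoding call. Below is a minimal grep-based sketch; the function name and the list of decoding functions in the pattern are assumptions, and an obfuscated payload can easily dodge a regex like this, so an empty result is not a clean bill of health.

```shell
#!/usr/bin/env bash

# List PHP files under a directory (default: current directory) that
# contain eval( applied directly to a decompression or base64 call,
# e.g. eval(gzuncompress(base64_decode(...))).
find_suspicious_php() {
  local root="${1:-.}"
  grep -rlE --include='*.php' \
    'eval[[:space:]]*\([[:space:]]*(gzuncompress|gzinflate|base64_decode)[[:space:]]*\(' \
    "$root"
}
```

Running `find_suspicious_php /path/to/wiki` prints one matching file per line; each hit still needs a human to read it, since some legitimate code does decode data this way.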

This has been fixed. It is just a matter of time before the search engines re-crawl and see the fixed pages.

Ah, so it wasn’t some kind of intentional prank. Good to know. This is near and dear to my heart because this very blog was once hacked in a similar way. I didn’t notice until I analyzed my HTTP logs and found that the top 20 search terms sending traffic to my site were all pharma-spammy terms. I found a single directory named `f/` that contained some special PHP scripts that did (probably cloaked) redirection to bad sites.
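If you want to run the same check on your own server, here is a rough sketch that pulls search terms out of the referrer field of an access log in the common Apache/nginx “combined” format. The function name and the Google-only referrer filter are assumptions. It does not decode `%xx` escapes, and modern search engines often leave the query out of the referrer entirely, so it is most useful on older logs.

```shell
#!/usr/bin/env bash

# Print the top N (default 20) search queries found in Google referrers
# in a combined-format access log, most frequent first.
top_search_terms() {
  local log="$1" n="${2:-20}"
  # Splitting on double quotes, the referrer is the 4th field:
  # host ... "REQUEST" status bytes "REFERRER" "USER-AGENT"
  awk -F'"' '{ print $4 }' "$log" \
    | grep -E '^https?://(www\.)?google\.' \
    | grep -oE '[?&]q=[^&]*' \
    | sed -E 's/^[?&]q=//; s/\+/ /g' \
    | sort | uniq -c | sort -rn | head -n "$n"
}
```

Each output line is a count followed by the query, so a compromised site shows up as a list dominated by terms that have nothing to do with its content.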