The new FATE server is shaping up well. I think most of the old configurations have been migrated to the new server. I see one new compiler for x86_64– PathScale. It’s not faring particularly well at this point.

New Tests

As I write this, I noticed that there are now an even 700 tests, twice as many as the last time I trumpeted such a milestone. (It should be noted that the new FATE system finally breaks down the master regression suite into individual tests.) Thankfully, it’s no longer necessary to wait for me to create or edit tests (anyone with FFmpeg privileges can do this), nor is it necessary to keep up with this blog to know exactly what tests are new. Now, you can simply inspect the file history on tests/fate.mak and tests/fate2.mak (I think these 2 files are going to merge in the near future).

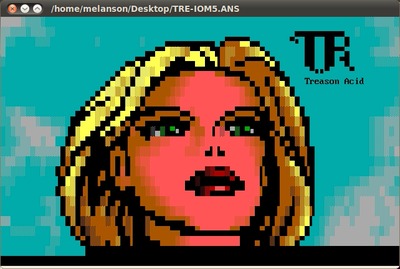

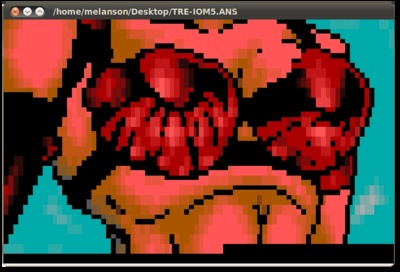

Vitor, as of r24865: “Add FATE test for ANSI/ASCII animation and TTY demuxer.” Eh? What’s this about? I admit I was completely removed from FFmpeg development for much of June and July so I could have missed a lot. Fortunately, I can check the file history to see which lines were added to make this test happen. And if FATE is exercising the test, you know exactly where the samples will live. Here’s this new decoder in action on the relevant sample:

The file history fingers Suxen drol/Peter Ross for this handiwork. I might have guessed– the only person who is arguably more enamored with old, weird formats than even I. Now we wait for the day that YouTube has support for this format. I’m sure there are huge archives of these animations out there (and I wager that Trixter and Jason Scott know where).

It’s an animation — it just keeps going

Meanwhile, the FATE suite now encompasses a bunch of perceptual audio formats, thanks to the 1-off testing method and a few other techniques. These formats include Bink audio, WMA Pro, WMA voice, Vorbis, ATRAC1, ATRAC3, MS-GSM, AC3, E-AC3, NellyMoser, TrueSpeech, Intel Music Coder, QDM2, RealAudio Cooker, QCELP (just going down the source control log here), and others, no doubt.

Then there’s this curious tidbit: “Add FATE test for WMV8 DRM”. The test spec is "fate-wmv8-drm: CMD = framecrc -cryptokey 137381538c84c068111902a59c5cf6c340247c39 -i $(SAMPLES)/wmv8/wmv_drm.wmv -an". I would still like to investigate FFmpeg’s cryptographic capabilities, which I suspect are moving in a direction to function as a complete SSL stack one day.

New Platforms

As for new platforms, the new FATE system finally allows testing on OS/2 (remember that classic? It was “the totally cool way to run your computer”). Thanks to Dave Yeo for taking this on.

Further, a new MIPS-based platform recently appeared on the FATE list. This one reports itself as running on 74kf CPU. Googling for this processor quickly brings up Mans’ post about the Popcorn Hour device. So, congratulations to him for getting the mundane box to serve a higher purpose. Perhaps one day, I’ll be able to do the same for that Belco Alpha-400 netbook.