You know what? I’m tired of being the benchmark bitch.

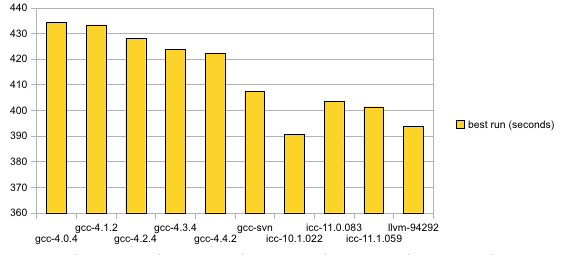

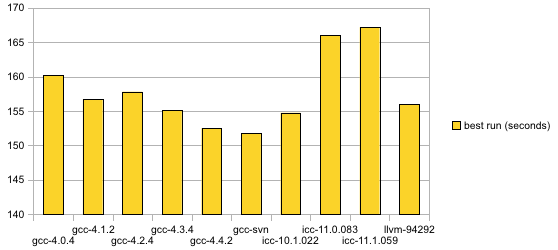

I have come to realize that benchmarks are exclusively the domain of people who have a vested interest in the results. I’m not financially or emotionally invested in the results of these compiler output performance comparisons; it was purely a matter of academic curiosity. As such, it’s hard to keep the proper motivation. No matter how carefully I set up a test and regardless of how many variables I control, the feedback is always the same: “Try tweaking this parameter! I’m sure that will make my favorite compiler clean up over all the other options!” “Your statistical methods are all wrong!” “Your graphs are fraudulently misleading.” Okay, I wholeheartedly agree with that last one, but blame OpenOffice for creating completely inept graphs by default.

Look, people, compilers can’t work magic. Deal with it. “–tweak-optim-parm=62” isn’t going to net the 10% performance increase needed to make the ethically pure, free software compiler leapfrop over the evil, proprietary compiler (and don’t talk to me about profile-guided optimization; I’m pretty sure that’s an elaborate hoax and even if it’s not, the proprietary compilers also advertise the same feature). Don’t put your faith in the compiler to make your code suck less (don’t I know). Investigate some actual computer science instead. It’s especially foolhardy to count on compiler optimizations in open source software. Not necessarily because of gcc’s quality (as you know, I think gcc does remarkably well considering its charter), but because there are so many versions of compilers that are expected to compile Linux and open source software in general. The more pressing problem (addressed by FATE) is making sure that a key piece of free software continues to compile and function correctly on a spectacular diversity of build and computing environments.

If anyone else has a particular interest in controlled FFmpeg benchmarks, you may wish to start with my automated Python script in this blog post. It’s the only thing that kept me sane when running these benchmarks up to this point.

I should clarify that I am still interested in reorganizing FATE so that it will help us to systematically identify performance regressions in critical parts of the code. The performance comparison I care most about is whether today’s FFmpeg SVN copy is slower than yesterday’s SVN copy.

See Also:

Update: I have to state that I’m not especially hurt by any criticism of my methods (though the post may have made it seem that way). Mostly, I wanted to force myself to quit wasting time on these progressively more elaborate and time-consuming benchmarks when they’re really not terribly useful in the grand scheme of things. I found myself brainstorming some rather involved profiling projects and I had to smack myself. I have far more practical things I really should be using my free time for.