As briefly mentioned in my last Theora post, I think FFmpeg’s Theora decoder can exploit multiple CPUs in a few ways: 1) Perform all of the DC prediction reversals in a separate thread while the main thread is busy decoding the AC coefficients (meanwhile, I have committed an optimization where the reversal occurs immediately after DC decoding in order to exploit CPU cache); 2) create n separate threads and assign each (num_slices / n) slices to decode (where a slice is a row of the image that is 16 pixels high).
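To make the second idea concrete, here is a minimal sketch of the slice partitioning using plain POSIX threads. It is not FFmpeg code and does not use FFmpeg's threading API: `SliceJob`, `count_slices`, `partition_slices`, `decode_slices`, and `decode_frame_threaded` are all hypothetical names, and the worker only counts slices where real decoding would go. It also assumes the slices can be decoded independently, which a real decoder would have to verify.

```c
#include <assert.h>
#include <pthread.h>

#define SLICE_HEIGHT 16   /* a slice is a 16-pixel-high row of the image */
#define MAX_THREADS  16   /* arbitrary cap for this sketch */

/* Hypothetical per-thread job: a contiguous range of slices [start, end). */
typedef struct SliceJob {
    int start;
    int end;
    int decoded;          /* number of slices this worker processed */
} SliceJob;

/* Number of slices in an image of the given height, rounding up. */
static int count_slices(int height)
{
    return (height + SLICE_HEIGHT - 1) / SLICE_HEIGHT;
}

/* Split num_slices across n jobs. Each job gets num_slices / n slices;
 * the first (num_slices % n) jobs take one extra to absorb the remainder. */
static void partition_slices(int num_slices, int n, SliceJob *jobs)
{
    assert(n > 0);
    int base  = num_slices / n;
    int extra = num_slices % n;
    int next  = 0;

    for (int i = 0; i < n; i++) {
        int len = base + (i < extra ? 1 : 0);
        jobs[i].start   = next;
        jobs[i].end     = next + len;
        jobs[i].decoded = 0;
        next += len;
    }
}

/* Placeholder worker: a real decoder would decode each slice here. */
static void *decode_slices(void *arg)
{
    SliceJob *job = arg;
    for (int s = job->start; s < job->end; s++)
        job->decoded++;
    return NULL;
}

/* Decode one frame with n worker threads.
 * Returns the total number of slices processed, or -1 on failure. */
static int decode_frame_threaded(int height, int n)
{
    pthread_t threads[MAX_THREADS];
    SliceJob  jobs[MAX_THREADS];
    int total = 0, started = 0, failed = 0;

    if (n < 1 || n > MAX_THREADS)
        return -1;

    partition_slices(count_slices(height), n, jobs);

    for (int i = 0; i < n; i++) {
        if (pthread_create(&threads[i], NULL, decode_slices, &jobs[i]) != 0) {
            failed = 1;
            break;
        }
        started++;
    }
    /* Join every thread that was started, even after a failure. */
    for (int i = 0; i < started; i++) {
        pthread_join(threads[i], NULL);
        total += jobs[i].decoded;
    }
    return failed ? -1 : total;
}
```

Calling `decode_frame_threaded(480, 4)` splits a 480-pixel-high frame into 30 slices and hands the four workers 8, 8, 7, and 7 slices respectively. Giving each thread a contiguous range keeps its memory accesses close together, though that is a choice made for this sketch rather than anything the decoder requires.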

So there’s the plan. Now, how to take advantage of FFmpeg’s threading API (which supports POSIX threads, Win32 threads, BeOS threads, and even OS/2 threads)? Would it surprise you to learn that this aspect is not extensively documented? Time to reverse engineer the API.

I also did some Googling regarding multithreaded FFmpeg. I mostly found forum posts complaining that FFmpeg isn’t effectively using however many cores someone’s turbo-charged machine happens to present to the OS, as demonstrated by their CPU monitoring tool. Since I suspect this post will rise in Google’s top search hits on the topic, allow me to apologize to searchers in advance by explaining that multimedia processing, while certainly CPU-intensive, does not necessarily lend itself to multithreading/multiprocessing. There are a few bits here and there in the encode or decode processes that can be parallelized, but the operation as a whole tends to be rather serial.

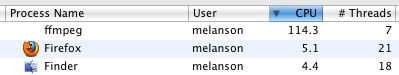

So this is the goal:

…to see FFmpeg break through the 99.9% barrier in the CPU monitor. As an aside, it briefly struck me as ironic that people want FFmpeg to use as much of as many available CPUs as possible but scorn the project from my day job for being quite capable of doing the same.

Moving right along, let’s see what can be done about exploiting the limited multithreading opportunities that Theora affords.

First off: it’s necessary to explicitly enable threading at configure-time (e.g., “--enable-pthreads” for POSIX threads on Unix flavors). Not sure why this is, but there it is.