Even though I have been studying and working on multimedia technology since 2000 — reverse engineering, documenting, and reimplementing a variety of audio and video codecs — I didn’t actually begin to understand why various algorithms achieved their compression until about 2003. I’m just like that — I study the practice first, and then the underlying theory eventually becomes clear to me (maybe; it has been 9 years and I still couldn’t explain everything about the discrete cosine transform if you asked).

I happened to be looking back over the ZMBV (DOSBox) video codec today. The last time I looked at ZMBV, I thought of a slightly better method for computing error between 2 blocks. My method involved summing the number of pixels that differed between 2 blocks. Michael promptly did me one better by implementing a “0th-order entropy approximator”. When I took a closer look at the method today, I thought it was functionally identical to my algorithm. It is XOR’ing 2 vectors of pixels, maintaining a histogram of the results (i.e., the number of pixel pairs whose bitwise XOR was 1, 2, etc.), then summing the numbers in the histogram array.

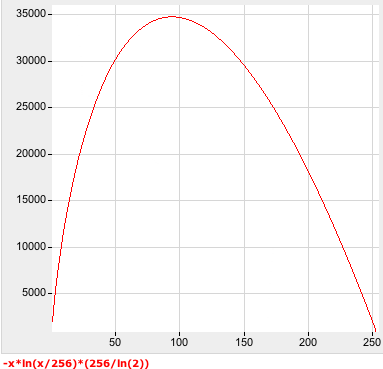

Except not quite. A closer examination of the summation revealed “sum+= score_tab[histogram[i]]”, and it occurs on elements 1..255. What is score_tab[]? Why, it’s:

/* for i from 1..255 */

score_tab[i]= -i * log(i/(double)(ZMBV_BLOCK*ZMBV_BLOCK)) * (256/M_LN2);

(ZMBV_BLOCK = 16, M_LN2 = ln(2)).

I want pictures. Pictures help me a lot. I don’t do a lot of heavy duty math lifting and am not up to date on modern tools. But I figured that in this day and age, there must be plenty of graphing tools on the web. This is the top Google hit for “graph a function” and it offers the following graph:

So that’s interesting. Now it’s time to reconcile this with Kostya’s helpful description of the method:

First of all, Michael’s code explained: he used table to estimate entropy (well, base term from the Shannon’s theory of information) of the XOR’ed block. In other words, the higher count of the same symbols, the lower entropy and the better compression of the block. 0-th order means he didn’t use context to estimate entropy.

I think I might be starting to understand. My method was predicated on trying to generate an error vector that had as many 0s as possible grouped into strings of 8 bits. I.e., I was trying to maximize 0 bytes without regard to what the non-zero bytes contained. Michael’s method is also concerned with maximizing 0 bytes but also cares about what is happening in those non-zero bytes. That graph indicates that the method prefers values at the top and bottom of the unsigned 8-bit range. Why would that be? I suspect that it may have something to do with the fact that the higher and lower values have longer strings of repeating symbols (e.g., 1 = 00000001; 255 = 11111111).

Or I might be completely wrong.

Sorry if this is kindergarten-level stuff to some of you readers. But you know I like to use this space as a personal technical journal for working out these lessons.

I just have been interested in general data compression stuff since last century (about 1998-1999). You ought to learn about arithmetic compression and modelling to better understand that stuff (it does not matter if you can’t fully understand it though).

Good, because that’s where I am. :-) I have studied arithmetic compression but don’t fully understand it.

Well, an idea of representing all information with an extremely long fraction (which takes all the file) is a bit counterintuitive. But common principles (like: the better you can predict symbol the better you can code it) should be easy to understand.

The most important issue is not arithmetic coder itself, it’s a model that feeds data into it. So when you have fixed frequencies for each symbol it’s called zero-order model, when you just count frequencies for each symbol and feed them to coder is called order one model; if you count frequencies for symbols depending on previous ones it’s called N-order model (i.e. you can say that after “th” string you are likely to expect ‘e’ or ‘a’ or ‘o’ but not ‘g’ or ‘p’). Everything goes from this.

I think you misunderstood: score_tab is used on the _count_ of the specific XOR values, not on the XOR values themselves.

For compressibility of a several hundred kB large frame, it simply doesn’t matter if you have all 0, or all 1 or all 2 or whatever (i.e. a fully black, fully gray or fully white frame are all about the same complexity).

That “0th-order entropy approximator” basically estimates the size of an optimal Huffman encoding (with a fixed input data symbol size of 8 bits int this case) of the data.

Maybe that helps to understand it, sometimes you have to read the first 20 ways someone tries to explain it till they finally come up with the 21st that makes it obvious to you :-)

Or maybe I should go back to the one area in which I have demonstrated marginal proficiency: continuously testing FFmpeg. :-)