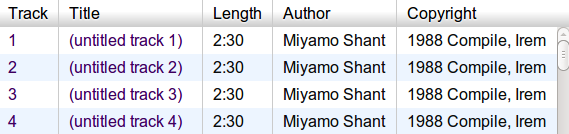

My Game Music Appreciation website has a big problem that many visitors quickly notice and comment upon. The problem looks like this:

The problem is that all of these songs are 2m30s in length. During the initial import process, unless a chiptune file already had curated length metadata attached, my metadata utility emitted a default play length of 150 seconds. This is not good if you want to listen to all the songs in a soundtrack without interacting with the player page, but have various short songs (think “game over” or other quick jingles) that are over in a few seconds. Such songs still pad out 150 seconds of silence.

So I needed to correct this. Possible solutions:

- Manually: At first, I figured I could ask the database which songs needed fixing and listen to them to determine the proper lengths. Then I realized that there were well over 1400 games affected by this problem. This just screams “automated solution”.

- Automatically: Ask the database which songs need fixing and then somehow ask the computer to listen to the songs and decide their proper lengths. This sounds like a winner, provided that I can figure out how to programmatically determine if a song has “finished”.

SQL Shame

This play adjustment task has been on my plate for a long time. A key factor that has blocked me is that I couldn’t figure out a single SQL query to feed to the SQLite database underlying the site which would give me all the songs I needed. To be clear, it was very simple and obvious to me how to write a program that would query the database in phases to get all the information. However, I felt that it would be impure to proceed with the task unless I could figure out one giant query to get all the information.

This always seems to come up whenever I start interacting with a database in any serious way. I call it SQL shame. This task got some traction when I got over this nagging doubt and told myself that there’s nothing wrong with the multi-step query program if it solves the problem at hand.

Suddenly, I had a flash of inspiration about why the so-called NoSQL movement exists. Maybe there are a lot more people who don’t like trying to derive such long queries and are happy to allow other languages to pick up the slack.

Estimating Lengths

Anyway, my solution involved writing a Python script to iterate through all the games whose metadata was output by a certain engine (the one that makes the default play length 150 seconds). For each of those games, the script queries the song table and determines if each song is exactly 150 seconds. If it is, then go to work trying to estimate the true length.

The forgoing paragraph describes what I figured was possible with only a single (possibly large) SQL query.

For each song represented in the chiptune file, I ran it through a custom length estimator program. My brilliant (err, naïve) solution to the length estimation problem was to synthesize seconds of audio up to a maximum of 120 seconds (tightening up the default length just a bit) and counting how many of those seconds had all 0 samples. If the count reached 5 consecutive seconds of silence, then the estimator rewound the running length by 5 seconds and declared that to be the proper length. Update the database.

There were about 1430 chiptune files whose songs needed updates. Some files had 1 single song. Some files had over 100. When I let the script run, it took nearly 65 minutes to process all the files. That was a single-threaded solution, of course. Even though I already had the data I needed, I wanted to try to hand at parallelizing the script. So I went to work with Python’s multiprocessing module and quickly refactored it to use all 4 CPU threads on the machine where the files live. Results:

- Single-threaded solution: 64m42s to process corpus (22 games/minute)

- Multi-threaded solution: 18m48s with 4 CPU threads (75 games/minute)

More than a 3x speedup across 4 CPU threads, which is decent for a primarily CPU-bound operation.

Epilogue

I suspect that this task will require some refinement or manual intervention. Maybe there are songs which actually have more than 5 legitimate seconds of silence. Also, I entertained the possibility that some songs would generate very low amplitude noise rather than being perfectly silent. In that case, I could refine the script to stipulate that amplitudes below a certain threshold count as 0. Fortunately, I marked which games were modified by this method, so I can run a new script as necessary.

SQL Schema

Here is the schema of my SQlite3 database, for those who want to try their hand at a proper query. I am confident that it’s possible; I just didn’t have the patience to work it out. The task is to retrieve all the rows from the games table where all of the corresponding songs in the songs table is 150000 milliseconds.

Continue reading