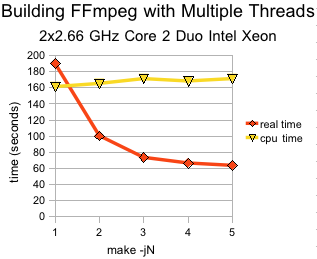

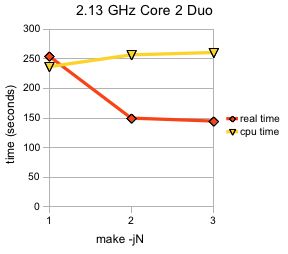

I got bored today and decided to empirically determine how much FFmpeg compilation time can be improved by using multiple build threads, i.e., ‘make -jN’ where N > 1. I also wanted to see if the old rule of “number of CPUs plus 1” makes any worthwhile difference. The thinking behind that latter rule is that there should always be one more build job queued up ready to be placed on the CPU if one of the current build jobs has to access the disk. I think I first learned this from reading the Gentoo manuals. I didn’t find that it made a significant improvement. But then, Gentoo is for ricers.
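For anyone wanting to repeat this kind of experiment, here is a minimal sketch of a timing harness. It is not the script used for the graphs here; the helper names `time_command` and `benchmark_make` are hypothetical, and it assumes a Unix-like system where `os.times()` reports the CPU time of finished child processes, with `make` available on the PATH.

```python
import os
import subprocess
import time


def time_command(cmd, cwd=None):
    """Run cmd to completion and return (real, user, sys) in seconds,
    roughly what the shell's `time` builtin reports."""
    before = os.times()
    start = time.perf_counter()
    subprocess.run(cmd, cwd=cwd, check=True,
                   stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
    real = time.perf_counter() - start
    after = os.times()
    # children_* accumulate the CPU time of child processes that have
    # been waited for, which includes the compilers spawned by make.
    user = after.children_user - before.children_user
    system = after.children_system - before.children_system
    return real, user, system


def benchmark_make(job_counts, cwd=".", run=time_command):
    """Time a clean `make -jN` build for each N in job_counts.

    `run` is injectable so the loop can be exercised without a real build.
    """
    results = {}
    for jobs in job_counts:
        run(["make", "clean"], cwd)            # start every run from scratch
        results[jobs] = run(["make", f"-j{jobs}"], cwd)
    return results
```

Calling `benchmark_make(range(1, (os.cpu_count() or 1) + 2))` from inside a configured source tree would cover everything from `-j1` up to "number of CPUs plus 1". A warmup build beforehand keeps disk caching from skewing the first result.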

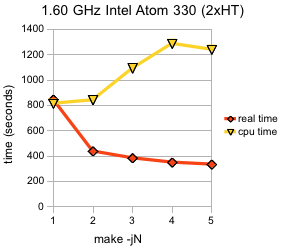

I think the most interesting thing to observe about these graphs is the CPU time (the amount of time the build jobs are actually spending on the combined CPUs). The number is roughly steady for the Core 2 CPUs regardless of number of jobs while the Hyperthreaded Atom CPU sees a marked increase in total CPU time. Is that an artifact of Hyperthreading? Or maybe I just didn’t put together a stable testing methodology.

CPU time numbers are somewhat worthless with HyperThreading, though the graph still looks suspicious even for that :-)

Anyway, how much of a difference high -j numbers (higher than number of CPUs) make depends a bit on things like disk speed, latency etc, too.

E.g., when the code is already in cache and, as with MPlayer, dependencies are generated separately, it still tends to give quite a speedup.

However, it seems to me that make is a bit inefficient: even with -j6 I can’t get my 4-core CPU to be constantly fully loaded; usually one CPU is only at 50% all the time.

On my Core 2 Duos I always use make -j2 for FFmpeg. One trick I pull is that I always build without ffserver because it removes one link for incremental builds. For Chromium I usually build with -j4 for compiling, but when I get to linking I abort and switch to -j1 because apparently 2 GB is no longer enough RAM to link a large C++ program in parallel.

I don’t think that “number of CPUs plus one” works very well nowadays. On my 8-way system, I still have CPU to spare when using make -j16 … the main issue there is that it will indeed load the memory and the process pool to run the sixteen builds at once (at least until the final linking stage is hit), but the I/O will block it unless I’m building in RAM.

I did some timings on my quad-core, hyper-threaded i7. Results at http://pastebin.ca/1679229 since I can’t do proper tables here. Sorry, no pretty graph.

These builds were done in tmpfs after a warmup run to make sure the compiler was cached.

Note the rise in CPU time when the number of processes exceeds the number of physical cores.

@Mans: I’ve done a graph in Google Docs based on the data you have provided: http://spreadsheets.google.com/oimg?key=0AiEtxVf52pledDB6VmlSZ0Zwc2c2dk9YemZvb0E5Rmc&oid=2&v=1258728117188

I guess if you extend the data to 9 or more, the user+sys should be similar.

@Mans: Yes, that’s about what would be expected with HyperThreading. It basically causes CPU time to be real-time*number of threads up to the number of “virtual” CPUs.

With the i7 that basically means a sudden jump once HyperThreading kicks in since it’s not really of much use.

On the Atom though, since it is in-order, HyperThreading usually gives a larger advantage. I think I’ll try to get some numbers with my single-core Atom to see if that behaves differently…

Updated up to -j12: http://pastebin.ca/1679533

As expected, both real and CPU times level out after 8.

Maybe OT

http://www.archivum.info/linux-kernel@vger.kernel.org/2008-02/23443/Hyperthreading_performance_oddities

It’s not as extreme as I thought, but HyperThreading on a single-CPU Atom still seems to make a bit more difference than on the others. However, I am not sure how limiting the speed of the SDHC card I’m compiling on is:

Atom N270, with HT:

-j1:

real 16m4.199s

user 15m25.314s

sys 0m28.966s

-j2:

real 12m42.379s

user 22m30.076s

sys 0m37.446s

-j3:

real 13m10.586s

user 22m37.465s

sys 0m37.730s
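To make the HyperThreading effect in these Atom numbers easier to see, here is a small sketch that converts the `time` values to seconds and computes, for each -j setting, the wall-clock speedup over -j1 and the ratio of CPU time (user+sys) to real time. The function names are made up for illustration; the figures are copied from the comment above.

```python
import re


def to_seconds(stamp):
    """Convert a bash `time` value such as '16m4.199s' to seconds."""
    match = re.fullmatch(r"(\d+)m(\d+(?:\.\d+)?)s", stamp)
    if match is None:
        raise ValueError(f"unrecognised time value: {stamp!r}")
    return int(match.group(1)) * 60 + float(match.group(2))


# Atom N270 with HT: jobs -> (real, user, sys), copied from the comment.
ATOM_N270 = {
    1: ("16m4.199s", "15m25.314s", "0m28.966s"),
    2: ("12m42.379s", "22m30.076s", "0m37.446s"),
    3: ("13m10.586s", "22m37.465s", "0m37.730s"),
}


def summarize(runs):
    """Return jobs -> (speedup over -j1, (user+sys)/real)."""
    baseline = to_seconds(runs[1][0])
    summary = {}
    for jobs, (real, user, system) in runs.items():
        real_s = to_seconds(real)
        cpu_s = to_seconds(user) + to_seconds(system)
        summary[jobs] = (baseline / real_s, cpu_s / real_s)
    return summary


for jobs, (speedup, ratio) in summarize(ATOM_N270).items():
    print(f"-j{jobs}: speedup {speedup:.2f}x, CPU/real {ratio:.2f}")
```

With -j2 the build is only about a quarter faster in wall-clock terms, yet the CPU/real ratio comes close to 2. That fits the earlier remark that with HyperThreading, CPU time tends towards real time multiplied by the number of busy hardware threads.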

I did some testing on a Phenom 9650 with gcc and the ffmpeg checkout sitting in tmpfs. I kept increasing the -j parameter until I stopped seeing improvement (reduction) in Real, then went a couple steps farther for good measure.

make -j1

real 5m5.695s

user 4m47.040s

sys 0m17.490s

(make clean)

make -j2

real 2m32.320s

user 4m47.010s

sys 0m16.970s

(make clean)

make -j3

real 1m45.182s

user 4m49.930s

sys 0m16.100s

(make clean)

make -j4 died with an error (time for memtest, I guess):

/usr/bin/ld: final link failed: Bad value

collect2: ld returned 1 exit status

make: *** [ffserver_g] Error 1

make: *** Waiting for unfinished jobs….

real 1m24.534s

user 4m48.640s

sys 0m15.590s

(make clean)

make -j4

real 1m25.470s

user 4m49.190s

sys 0m16.540s

FWIW, after the successful make -j4

/home/shortcircuit/tmpfs

4.0G 242M 3.7G 7% /home/shortcircuit/tmpfs

And free -m

total used free shared buffers cached

Mem: 8003 7022 980 0 431 5211

-/+ buffers/cache: 1379 6623

Swap: 15257 20 15236

(make clean)

make -j5

real 1m23.458s

user 4m48.650s

sys 0m15.080s

(make clean)

make -j6

real 1m20.055s

user 4m45.800s

sys 0m14.730s

(make clean)

make -j7

real 1m19.038s

user 4m45.570s

sys 0m14.580s

(make clean)

make -j8

real 1m17.953s

user 4m45.370s

sys 0m14.000s

(make clean)

make -j9

real 1m23.532s

user 4m44.680s

sys 0m13.860s

(make clean)

make -j10

real 1m21.578s

user 4m45.590s

sys 0m13.090s

(make clean)

make -j11

real 1m24.452s

user 4m44.230s

sys 0m14.100s

It looks like for my setup, when compiling within tmpfs, N*2 (twice the number of cores, i.e. -j8 on this quad-core) gets the fastest results.
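As a quick check of that conclusion, the sketch below takes the real times listed above (using the successful -j4 run), picks the fastest -j value, and computes the speedup relative to -j1. The `CORES = 4` constant is an assumption based on the Phenom 9650 being a quad-core part, and the helper name is hypothetical.

```python
def to_seconds(stamp):
    """Convert a value like '5m5.695s' to seconds."""
    minutes, seconds = stamp.rstrip("s").split("m")
    return int(minutes) * 60 + float(seconds)


# Phenom 9650, tmpfs: jobs -> real time, copied from the runs above.
PHENOM_REAL = {
    1: "5m5.695s", 2: "2m32.320s", 3: "1m45.182s", 4: "1m25.470s",
    5: "1m23.458s", 6: "1m20.055s", 7: "1m19.038s", 8: "1m17.953s",
    9: "1m23.532s", 10: "1m21.578s", 11: "1m24.452s",
}

CORES = 4  # assumption: the Phenom 9650 is a quad-core CPU

real = {jobs: to_seconds(stamp) for jobs, stamp in PHENOM_REAL.items()}
best_jobs = min(real, key=real.get)          # -j value with the lowest real time
speedup = {jobs: real[1] / secs for jobs, secs in real.items()}

print(f"fastest: -j{best_jobs} ({real[best_jobs]:.1f}s)")
print(f"speedup at -j{CORES}: {speedup[CORES]:.2f}x")
print(f"speedup at -j{best_jobs}: {speedup[best_jobs]:.2f}x")
```

The gap between -j4 and -j8 is only a few seconds per build, so a single run of each is not much to go on; repeating each setting a few times would show whether the difference is larger than the run-to-run noise.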