I processed some more unknown samples today, the ones that came from last month’s big Picsearch score. I found some interesting QuickTime specimens. One of them was filed under video codec FourCC ‘fire’. The sample contained only one frame of type fire, and that frame was very small (238 bytes) and appeared to contain a number of small sub-atoms. Since the sample had a .mov extension, I decided to check it out in Apple’s QuickTime Player. It played fine, and you can see the result on the new fire page I made in the MultimediaWiki. Apparently, the fire effect is built into QuickTime. The file also features a single frame of RPZA video data. My guess is that the logo on display is encoded with RPZA while the fire block defines parameters for a fire animation.

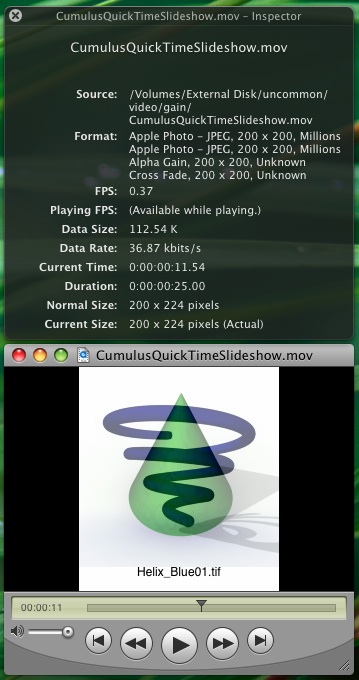

Moving right along, I got to another set of QuickTime samples that were filed under the ‘gain’ video codec. This appears to be another meta-codec and this is what it looks like in action:

I decided to post this pretty screenshot here since I didn’t feel like creating another Wiki page for what I don’t consider to be a “real” video codec. The foregoing CumulusQuickTimeSlideshow.mov sample comes from here and actually contains 5 separate trak atoms: 2 define ‘jpeg’ data, 1 is ‘gain’, 1 is ‘dslv’ and the last is ‘text’, which defines ASCII strings containing the filenames on the bottom of the slideshow. I have no idea what the dslv atom is for, but something, somewhere in the file defines whether this so-called alpha gain effect will use a cross fade (as seen with the Cumulus shapes) or an iris transition effect (as seen in the sample na_visit03.mov here).
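For anyone who wants to repeat this kind of track census on their own samples, here is a rough Python sketch of how the FourCCs can be pulled out of each trak atom. It is not the tool I used; the function names `iter_atoms` and `track_fourccs` are made up for illustration. It assumes the usual QuickTime layout (a 32-bit big-endian size followed by a 4-byte type, with the sample descriptions living in moov/trak/mdia/minf/stbl/stsd) and it does not handle compressed movie headers.

```python
import struct

# Container atoms on the path from the file root down to 'stsd'.
CONTAINERS = {b"moov", b"mdia", b"minf", b"stbl"}


def iter_atoms(buf, start=0, end=None):
    """Yield (type, payload_start, payload_end) for each atom in buf[start:end]."""
    end = len(buf) if end is None else end
    pos = start
    while pos + 8 <= end:
        size, kind = struct.unpack_from(">I4s", buf, pos)
        header = 8
        if size == 1:
            # A size of 1 means a 64-bit extended size follows the type.
            if pos + 16 > end:
                break
            size = struct.unpack_from(">Q", buf, pos + 8)[0]
            header = 16
        elif size == 0:
            # A size of 0 means the atom runs to the end of the enclosing space.
            size = end - pos
        if size < header or pos + size > end:
            break  # malformed or truncated atom: stop rather than guess
        yield kind, pos + header, pos + size
        pos += size


def track_fourccs(buf):
    """Return one list of sample-description FourCCs per trak atom."""
    tracks = []

    def walk(start, end, current):
        for kind, s, e in iter_atoms(buf, start, end):
            if kind == b"trak":
                fourccs = []
                tracks.append(fourccs)
                walk(s, e, fourccs)
            elif kind in CONTAINERS:
                walk(s, e, current)
            elif kind == b"stsd" and current is not None and e - s >= 8:
                # stsd payload: version/flags (4 bytes), entry count (4 bytes),
                # then entries that each begin with a size and a FourCC.
                count = struct.unpack_from(">I", buf, s + 4)[0]
                p = s + 8
                for _ in range(count):
                    if p + 8 > e:
                        break
                    entry_size, fmt = struct.unpack_from(">I4s", buf, p)
                    current.append(fmt.decode("latin-1"))
                    if entry_size < 8:
                        break
                    p += entry_size

    walk(0, len(buf), None)
    return tracks
```

Reading a sample into memory with `open(path, "rb").read()` and passing the bytes to `track_fourccs` should give one list per track. The sketch loads the whole file at once, which is fine for small samples like these but not for large movies.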

So much about the QuickTime format remains a mystery.