Greed, for lack of a better word, is good; Greed works. Well, most of the time. Maybe.

Picking Prediction Modes

VP8 uses one of 4 prediction modes to predict a 16×16 luma block or 8×8 chroma block before processing it (for luma, a block can also be broken into 16 4×4 blocks for individual prediction using even more modes).

So, how to pick the best predictor mode? I had no idea when I started writing my VP8 encoder. I did not read any literature on the matter; I just sat down and thought of a brute-force approach. According to the comments in my code:

// naive, greedy algorithm: // residual = source - predictor // mean = mean(residual) // residual -= mean // find the max diff between the mean and the residual // the thinking is that, post-prediction, the best block will // be comprised of similar samples

After removing the predictor from the macroblock, individual 4×4 subblocks are put through a forward DCT and quantized. Optimal compression in this scenario results when all samples are the same since only the DC coefficient will be non-zero. Failing that, when the input samples are at least similar to each other, few of the AC coefficients will be non-zero, which helps compression. When the samples are all over the scale, there aren’t a whole lot of non-zero coefficients unless you crank up the quantizer, which results in poor quality in the reconstructed subblocks.

Thus, my goal was to pick a prediction mode that, when applied to the input block, resulted in a residual in which each element would feature the least deviation from the mean of the residual (relative to other prediction choices).

Greedy Approach

I realized that this algorithm falls into the broad general category of “greedy” algorithms— one that makes locally optimal decisions at each stage. There are most likely smarter algorithms. But this one was good enough for making an encoder that just barely works.

Compression Results

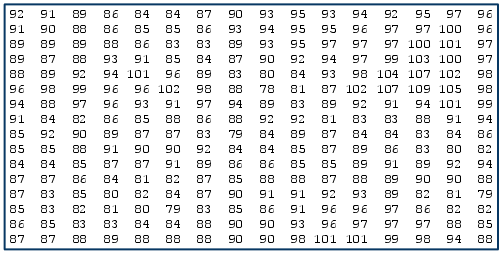

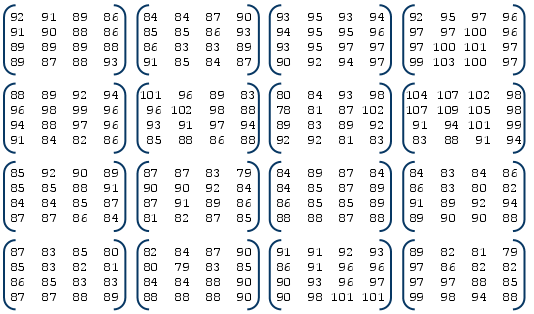

I checked the total file compression size on my usual 640×360 Big Buck Bunny logo image while forcing prediction modes vs. using my greedy prediction picking algorithm. In this very simple test, DC-only actually resulted in slightly better compression than the greedy algorithm (which says nothing about overall quality).

| prediction mode | quantizer index = 0 (minimum) | quantizer index = 10 |

|---|---|---|

| greedy | 286260 | 98028 |

| DC | 280593 | 95378 |

| vertical | 297206 | 105316 |

| horizontal | 295357 | 104185 |

| TrueMotion | 311660 | 113480 |

As another data point, in both quantizer cases, my greedy algorithm selected a healthy mix of prediction modes:

- quantizer index 0: DC = 521, VERT = 151, HORIZ = 183, TM = 65

- quantizer index 10: DC = 486, VERT = 167, HORIZ = 190, TM = 77

Size vs. Quality

Again, note that this ad-hoc test only measures one property (a highly objective one)– compression size. It did not account for quality which is a far more controversial topic that I have yet to wade into.