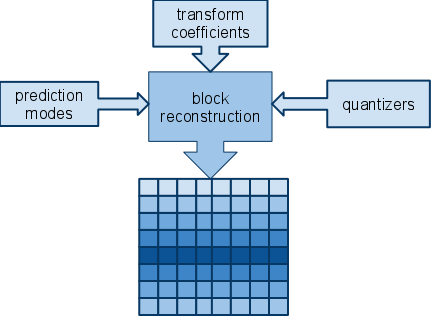

Regarding my toy VP8 encoder, Pengvado mentioned in the comments of my last post, “x264 looks perfect using only i16x16 DC mode. You must be doing something wrong in computing residual or fdct or quantization.” This makes a lot of sense. The encoder generates a series of elements which describe how to reconstruct the original image. Intra block reconstruction takes the following elements into consideration: the predictors, the coefficients, and the quantizers.

I have already verified that both my encoder and FFmpeg’s VP8 decoder agree precisely on how to reconstruct blocks based on the predictors, coefficients, and quantizers. Thus, if the decoded image still looks crazy, the elements the encoder is generating to describe the image must be wrong.

So I started studying the forward DCT, which I had cribbed wholesale from the original libvpx 0.9.0 source code. It should be noted that the formal VP8 spec only defines the inverse transform process, not the forward process. I was using a version designated as the “short” version, vs. the “fast” version. Then I looked at the 0.9.5 FDCT. Then I got the idea of comparing the results of each.

input: 92 91 89 86 91 90 88 86 89 89 89 88 89 87 88 93

- libvpx 0.9.0 “short”:

forward: -314 5 1 5 4 5 -2 0 0 1 -1 -1 1 11 -3 -4

inverse: 92 91 89 86 89 86 91 90 91 90 88 86 88 86 89 89

- libvpx 0.9.0 “fast”:

forward: -314 4 0 5 4 4 -2 0 0 1 0 -1 1 11 -2 -5

inverse: 91 91 89 86 88 86 91 90 91 90 88 86 88 86 89 89

- libvpx 0.9.5 “short”:

forward: -312 7 1 0 1 12 -5 2 2 -3 3 -1 1 0 -2 1

inverse: 92 91 89 86 91 90 88 86 89 89 89 88 89 87 88 93

I was surprised when I noticed that input[] != idct(fdct(input[])) in some of the above cases. Then I remembered that this round-trip property isn’t what is meant by a “bit-exact” transform. The term only means that all implementations of the inverse transform are supposed to produce bit-exact output for a given vector of input coefficients.
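To see why the round trip need not be an identity, here is a minimal Python sketch. It does not use libvpx's integer transforms: the `fdct` and `idct` below are a stand-in floating-point 4×4 DCT-II whose results are rounded to integers, and the 16 input values are assumed to form a row-major 4×4 block. All names are illustrative.

```python
import math

N = 4

def dct_matrix():
    # Orthonormal DCT-II basis: row k holds the k-th basis vector.
    rows = []
    for k in range(N):
        scale = math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)
        rows.append([scale * math.cos(math.pi * (2 * n + 1) * k / (2 * N))
                     for n in range(N)])
    return rows

C = dct_matrix()

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(N)) for j in range(N)]
            for i in range(N)]

def transpose(a):
    return [list(row) for row in zip(*a)]

def fdct(block):
    # Forward 2D transform, then round coefficients to integers.
    coeffs = matmul(matmul(C, block), transpose(C))
    return [[round(v) for v in row] for row in coeffs]

def idct(coeffs):
    # Inverse 2D transform, then round samples to integers.
    samples = matmul(matmul(transpose(C), coeffs), C)
    return [[round(v) for v in row] for row in samples]

def max_roundtrip_error(block):
    rebuilt = idct(fdct(block))
    return max(abs(block[i][j] - rebuilt[i][j])
               for i in range(N) for j in range(N))

# The input vector from the post, assumed to be a row-major 4x4 block.
block = [[92, 91, 89, 86],
         [91, 90, 88, 86],
         [89, 89, 89, 88],
         [89, 87, 88, 93]]
print(max_roundtrip_error(block))
```

Rounding the coefficients discards information, so the rebuilt block is only guaranteed to be close to the input (within 2 per sample for this stand-in), not equal to it.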

Anyway, I tried applying each of these forward transforms. I got slightly differing results, with the latest one I tried (the fdct from libvpx 0.9.5) producing the best results (to my eye). At least the trees look better in the Big Buck Bunny logo image:

The dense trees of the Big Buck Bunny logo using one of the libvpx 0.9.0 forward transforms

The same segment of the image using the libvpx 0.9.5 forward transform

Then again, it could be that the different numbers generated by the newer forward transform triggered different prediction modes to be chosen. Overall, adopting the newer FDCT did not dramatically improve the encoding quality.

My work on intra 4×4 mode encoding is producing rather more accurate blocks than my intra 16×16 encoder. Pengvado indicated that x264 generates perfectly legible results when forcing the encoder to only use intra 16×16 mode. To be honest, I’m having trouble understanding how that can possibly occur, given the Walsh-Hadamard transform (WHT). I think that’s where a lot of the error is creeping in with my intra 16×16 encoder. Then again, FFmpeg implements an inverse WHT function that bears ‘vp8’ in its name. This implies that it’s specific to VP8 and not exactly shared with H.264.

DCT and WHT each contain a complete set of basis vectors. Therefore, if you can control the input coefficients with sufficient precision (i.e. have a low enough quantizer), then you can make the output whatever you want, including undoing arbitrarily bad prediction (at some cost in bits). That property is obviously transitive, so WHT∘DCT works too. A given standard might or might not allow quantizers that low (H.264 does, H.263 doesn’t), but even if not, the necessary error won’t be more than a couple of LSBs in the pixel domain.
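The completeness argument can be sketched with a plain 4×4 Hadamard matrix. This is an illustration only: `H`, `wht`, `iwht`, and `reconstruct` are hypothetical names, the scaling is not VP8's actual WHT, and no quantization is applied. With exact coefficients, the reconstruction hits the target block no matter how bad the prediction was.

```python
# Plain 4x4 Hadamard matrix; rows are mutually orthogonal, so H * H^T = 4 * I.
H = [[1, 1, 1, 1],
     [1, 1, -1, -1],
     [1, -1, -1, 1],
     [1, -1, 1, -1]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transpose(a):
    return [list(row) for row in zip(*a)]

def wht(block):
    # Unnormalized forward 2D transform: H * X * H^T.
    return matmul(matmul(H, block), transpose(H))

def iwht(coeffs):
    # H^T * Y * H equals 16 * X, so the division is exact for
    # coefficients that come straight out of wht().
    scaled = matmul(matmul(transpose(H), coeffs), H)
    return [[v // 16 for v in row] for row in scaled]

def reconstruct(prediction, target):
    # Code the residual, decode it, and add it back to the prediction.
    residual = [[t - p for t, p in zip(t_row, p_row)]
                for t_row, p_row in zip(target, prediction)]
    decoded = iwht(wht(residual))
    return [[p + d for p, d in zip(p_row, d_row)]
            for p_row, d_row in zip(prediction, decoded)]

target = [[92, 91, 89, 86],
          [91, 90, 88, 86],
          [89, 89, 89, 88],
          [89, 87, 88, 93]]
bad_prediction = [[0, 255, 0, 255] for _ in range(4)]  # deliberately awful
print(reconstruct(bad_prediction, target) == target)  # prints True
```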

The ‘k’ letter looks better to me.

It’s like if some luma or chroma subblocks are misplaced, you can see the problem in every contrasty zone.

Don’t know if the decoder allows you to decode luma only, or chroma only, so you could check.

Just completely remove contrast or saturation and you should have luma-only or chroma-only respectively.
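If the decoded frames can be dumped as raw planar YUV, the same separation can be done directly on the planes. The sketch below assumes an I420 layout (Y plane, then U, then V, with chroma subsampled 2×2) and even dimensions; the helper names are hypothetical.

```python
def split_i420(frame, width, height):
    # Slice one raw I420 frame into its Y, U and V planes.
    y_size = width * height
    c_size = (width // 2) * (height // 2)
    y = frame[:y_size]
    u = frame[y_size:y_size + c_size]
    v = frame[y_size + c_size:y_size + 2 * c_size]
    return y, u, v

def luma_only(frame, width, height):
    # Neutral chroma (128) leaves a grayscale picture.
    y, u, v = split_i420(frame, width, height)
    return bytes(y) + bytes([128]) * (len(u) + len(v))

def chroma_only(frame, width, height):
    # Flat mid-gray luma leaves only the color information.
    y, u, v = split_i420(frame, width, height)
    return bytes([128]) * len(y) + bytes(u) + bytes(v)

frame = bytes(range(24))  # a made-up 4x4 frame: 16 luma + 4 + 4 chroma bytes
print(len(luma_only(frame, 4, 4)), len(chroma_only(frame, 4, 4)))
```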

Not sure it works so well with FFmpeg’s definition of contrast (I think you’ll have to increase brightness there if you decrease contrast) but e.g. mplayer -vo gl should handle it nicely, and there might be a patch from me on ffmpeg-devel to change swscale behaviour.

@Pengvado: In VP8, the (quasi-documented) minimum quantizer for the DC-only (Y2) block is 8. This seems to result in a significant amount of error during the quantization round-trip. I don’t know if this is also true in H.264.
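A rough way to see how a minimum step size of 8 limits precision: the sketch below uses a generic round-to-nearest scalar quantizer, which is an assumption for illustration and not libvpx's actual quantizer. `quantize`, `dequantize`, and `max_roundtrip_error` are hypothetical helper names.

```python
def quantize(coeff, step):
    # Round-to-nearest on the magnitude, then restore the sign.
    sign = -1 if coeff < 0 else 1
    return sign * ((abs(coeff) + step // 2) // step)

def dequantize(level, step):
    return level * step

def max_roundtrip_error(step, lo=-1024, hi=1024):
    # Worst-case difference between a coefficient and its dequantized value.
    return max(abs(dequantize(quantize(c, step), step) - c)
               for c in range(lo, hi + 1))

print(max_roundtrip_error(1))  # prints 0
print(max_roundtrip_error(8))  # prints 4
```

With a step of 8, each dequantized coefficient can be off by up to 4, and the inverse transform then carries that error into the samples it reconstructs.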

@Thibaut & Reimar: I know it looks like random pairs of 4×4 subblocks are swapped. But I’m pretty sure that’s not the case. From my analysis, the WHT quantization introduces a lot of error that propagates through to the reconstruction.

How can the transform in VP8 be improved?

How does it work for other transforms like the WHT?