As stated in a previous blog post on the matter, FFmpeg’s policy is to reimplement codecs rather than adopt other codebases wholesale. And so it is with Google’s recently open sourced VP8 codec, the video portion of their Webm initiative. I happen to know that the new FFmpeg implementation is in the capable hands of several of my co-developers so I’m not even worrying about that angle.

Instead, I thought of another of my characteristically useless exercises: Create an independent VP8 decoder implementation entirely in pure Python. Silly? Perhaps. But it has one very practical application: By attempting to write a new decoder based on the official bitstream documentation, this could serve as a mechanism for validating said spec, something near and dear to my heart.

What is the current state of the spec? Let me reiterate that I’m glad it exists. As I stated during the initial open sourcing event, everything that Google produced for the initial event went well beyond my wildest expectations. Having said that, the documentation does fall short in a number of places. Fortunately, I am on the Webm mailing lists and am sending in corrections and ideas for general improvement. For the most part, I have been able to understand the general ideas behind the decoding flow based on the spec and am even able to implement certain pieces correctly. Then I usually instrument the libvpx source code with output statements in order to validate that I’m doing everything right.

Token Blocker

Unfortunately, I’m quite blocked right now on the chapter regarding token/DCT coefficient decoding (chapter 13 in the current document iteration). In his seminal critique of the codec, Dark Shikari complained that large segments of the spec are just C code fragments copy and pasted from the official production decoder. As annoying as that is, the biggest insult comes at the end of section 13.3:

While we have in fact completely described the coefficient decoding procedure, the reader will probably find it helpful to consult the reference implementation, which can be found in the file detokenize.c.

The reader most certainly will not find it helpful to consult the file detokenize.c. The file in question implements the coefficient residual decoding with an unholy sequence of C macros that contain goto statements. Honestly, I thought I did understand the coefficient decoding procedure based on the spec’s description. But my numbers don’t match up with the official decoder. Instrumenting or tracing macro’d code is obviously painful and studying the same code is making me think I don’t understand the procedure after all. To be fair, entropy decoding often occupies a lot of CPU time for many video decoders and I have little doubt that the macro/goto approach is much faster than clearer, more readable methods. It’s just highly inappropriate to refer to it for pedagogical purposes.

Aside: For comparison, check out the reference implementation for the VC-1 codec. It was written so clearly and naively that the implementors used an O(n) Huffman decoder. That’s commitment to clarity.

I wonder if my FFmpeg cohorts are having better luck with the DCT residue decoding in their new libavcodec implementation? Maybe if I can get this Python decoder working, it can serve as a more appropriate reference decoder.

Update: Almost immediately after I posted this entry, I figured out a big problem that was holding me back, and then several more small ones, and finally decoded by first correct DCT coefficient from the stream (I’ve never been so happy to see the number -448). I might be back on track now. Even better was realizing that my original understanding of the spec was correct.

Unrelated

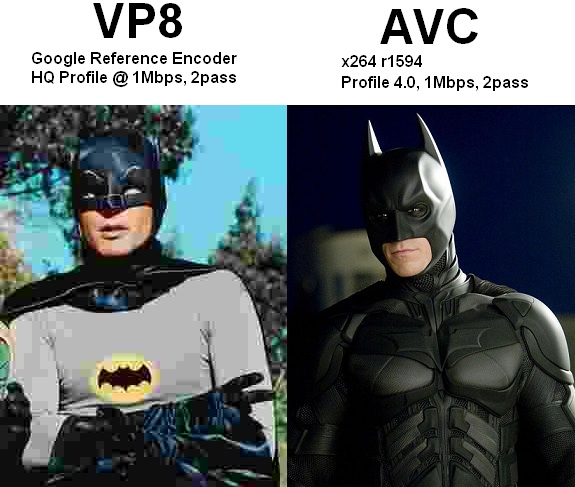

I found this image on the Doom9 forums. I ROFL’d:

It’s probably unfair and inaccurate but you have to admit it’s funny. Luckily, quality nitpickings aren’t my department. I’m just interested in getting codecs working, tested, and documented so that more people can use them reliably.

I think the two biggest catches when I did coeff decode were:

1: No bit is read for the first branch of the token probability tree for tokens immediately after DCT_0; the next token uses the subtree as if a 1 had been read. The spec glosses over this, only mentioning it in describing the context selection (“… because an end-of-block token must have at least one non-zero value before it …”)

2. Figuring out when the left/top predictors for skipped blocks are reset. In particular, Y2 blocks’ non-zero predictors are NOT reset on skipped macroblocks with mode B_PRED or SPLITMV.

It didn’t help that I mistyped one probability of 225 as 255, and completely omitted an entry from the probability table for dct_cat6…

Hopefully they’ll make these clear in the spec from my vague complaint about it.

Anyway, decode_block_coeffs() and decode_mb_coeffs() in my tree should be easier to understand than libvpx (at least until I do a manually inlined+unrolled version too) if you want to look at them : http://github.com/yuvi/ffmpeg/blob/51dcb594835fd3e7413d539f574c6477401d5af8/libavcodec/vp8.c#L713

@dconrad: Did you actually retype all the probability tables instead of copy/pasting them from the spec? I ported them to Python by copy/pasting and changing the braces to brackets.

We’ll have to stick together through this; I have a feeling we’re 2 of the few people who are endeavoring to complete this task.

If I didn’t have so many other things going on, I’d try pitching in. I don’t have much experience with vcodec code, but making code easier to understand seems to be an addiction.

My thought: Run the macro processor on the coeff code, compile against that. Iteratively simplify/reduce/clarify/abstract/rewrite the resulting C code in as small steps as possible, verifying that they compile and produce the same output each time. Eventually, you’ll have a clear picture of how the code is structured, presented in pure C.

Every now and again, I have to do this for old code bases at work, in order to get anywhere at debugging them. (It usually results in performance improvements, too, but that’s a result of subsequent reoptimization.)

Something else to think about…How much of that VP8 codec code is utilizing undefined behavior? I don’t think I need to elaborate on the gotchas that will present while you’re trying to understand the code and reimplement in another language.

Er, by “produce the same output each time”, I was referring to run-time output, not compiler object file output. :)

@Michael: Those are some creative approaches. Fortunately, almost immediately after I posted the article, I figured out the problem and got over the hump that had me stuck for a few days.

Pingback: See's Message » Google改进VP8编解ç 器

Have you finished you python decoder ? I also get stuck in decoding DCT coefficients when trying to implement my own gpgpu-based decoder.